20 Tips on How to Improve the UX of Your Application - Extensive Guide (Updated)

Some stats to start with

Neglecting user experience translates to lost opportunities to convert users into customers, with a very real impact on the bottom line. Not only this, but companies which do not invest in UX risk being left behind by the ones that do.

- Walker’s ‘Customers 2020’ report revealed that, by 2020, customer experience will overtake price and product as the key brand differentiator.

- According to a report by Forrester, sites with a “superior user experience” can see visit-to-lead conversions up to 400% higher than those without.

- A study by leading research and consulting firm Temkin Group revealed that 84% of companies expect to increase their focus on customer experience measurements and metrics.

How can you improve the UX of your app?

1. UX research

UX research is a way of discovering users' needs, behavior, and expectations. A product built with the support of data can be targeted precisely to its audience, which makes the project more likely to succeed.

The main focus of both UX design and UX research is creating user-centered products. That can be achieved through different techniques and methods. However, the data collected during UX research is an essential foundation.

Benefits of UX research for the business and the user

- Saving money. The knowledge and information gained from research help you create a product that is desired and aligned with the market. This means that you can avoid spending money on developing and pursuing bad ideas.

- The possibility to make improvements and adjustments during the development stage. Your product does not have to go live for you to get feedback and implement new or different solutions. You can do that in the early stages and put out a fully polished and finished product.

- The users get what they want. As simple as it seems, it is not that obvious. How many times did you struggle with buying something online or did not know how to use an app, got frustrated, and quit? This should not happen with a well-made UX design. The process of using an app or website should be as smooth and as convenient as it can be.

How to prepare for UX research?

What is important is to realize what has already been done and what kind of questions you want answered. The methods chosen and decisions taken can depend on the stage of the development or the type of the project.

Thus, before UX research begins, you should gather as much information as you can about your company, your competitors, the users, etc. With all that in mind, pick some routes that the UX research could take and decide where it should lead you.

UX research can be done during various stages and bring different insights.

- Research is most valuable and has the most impact at the beginning of the project. It will prevent you from making big changes later on during development, when they can be difficult and expensive to implement.

- However, research can also be beneficial when the project is already underway. Projects differ from each other and doing analysis while the undertaking is already ongoing can make sense in some situations. This method will ensure that design is still on the right track. It might also uncover some new issues that were not predicted or noticed earlier and need to be solved before the launch.

UX research methods

- Desk research, i.e. gathering knowledge on what was already done in the past, is very important. It gives you insights about the market and the users, and saves time that would otherwise be spent on answering already answered questions.

- Expert evaluation is a method where a UX expert reviews a digital product and discovers potential usability issues that could affect user experience.

- Competitor analysis determines the strong and weak points of the product’s competitors. It helps to build a better strategy for the product and gain the leading position on the market.

- In-depth interview (IDI) is a structured interview run by a UX researcher with potential users. The goal of this kind of research is to verify user behaviors, attitudes and needs.

- Behavioral data analysis is a research method based on observing and analysing user behavior. It shows what actions people actually perform rather than what they declare they would do.

- Heatmap analysis shows how users performed while using the product through tracking of mouse movements, clicks, and eye movements.

- Product idea validation is a method used before any design and implementation work. It is run in order to identify if the product idea has a chance of succeeding on the market.

Research methods can be fitted to the project depending on the budget, stage of a product development, time limit or any other specific need.

Also, every designer works in their own style and might have their favourite solutions. Flexibility and adaptability are key to success – be creative with it! Do not hesitate to create or adapt already established tools to your specific needs.

2. Desk research

Desk research, or secondary research, is all about gathering useful information from studies that have already been done by others. This means that, instead of starting from scratch, you interpret, collate, and analyze existing data sets.

Desk research is a well-established practice in business and scholarship, in part due to its potentially huge ROI. After all, it is much less resource-intensive to make use of things that are already out there, rather than build a team, design methodology, and execute a major research project.

Even if you want to do some brand-new primary research in the future, it seems obvious to review the existing information before you do so – it might save you a lot of money and effort. Either way, it makes sense to do some desk research whenever you want to gain some insights into any subject.

Having as much knowledge as possible about your market, competitors, and customers can give you the power to succeed as a business.

Main types of desk research

Desk research can be divided into two main types: internal and external. The types refer to whether the information analyzed is being sourced internally (from your organisation) or externally (from somewhere else).

Internal research has a number of advantages, especially with regards to costs and resource consumption.

- Everything is done in-house – both the information and the researchers come from within your company.

- Another benefit of this approach is that it generates additional value from the work you have already done – any new knowledge gained this way is, indirectly, a result of the existing know-how developed by your business.

External research, while more expensive and resource-intensive, has the obvious benefit of covering a much broader scope of data: after all, no matter how advanced your organisation is, most of the world’s information exists outside of it. This is where a sub-typology based on the source of information used comes in. The three main types of sources are

- online data,

- government data,

- customer desk data.

The first two need no explanation, besides perhaps the fact that some useful data may not be available online (such as print books or specialized journals), so it might make sense to visit a library or a book retailer for some more niche information. The last one – customer desk data – boils down to directly communicating with existing or prospective customers.

3. Expert evaluation methods

Expert evaluation is an effective, relatively cheap technique that doesn’t require many resources.

Here are the main types of expert evaluations:

- Heuristic evaluation is a usability engineering method that tests website usability. Its primary goal is to discover problems in user interface design and make them solvable. This process involves usability experts who inspect and assess the interface’s compliance with predetermined usability principles. It is recommended for the site to be tested by an experienced UX expert so that you have a professional perspective at the end of the evaluation.

This method will deliver you a clear picture of your product’s shortcomings. The major advantages of heuristic evaluation are that it doesn’t require extensive involvement, and that it can be used in all phases of the product development, giving you useful guidelines to improve your website and provide a much more friendly experience to its users.

- Heuristic markup is a type of heuristic evaluation that can be done by some of your employees using an informal method of reviewing. In this method, someone will spend a couple of hours going through the product and doing a set of tasks, just like users would do. This will give you a full close-up of all the weaknesses of your product.

- Cognitive Walkthrough is a task-specific approach made to check product usability. It was designed to test whether new users can handle the interface and easily complete the intended tasks. This approach is fast and very cost-effective, can be carried out by anyone, gives you the user’s perspective, and can help you prioritize the problems that need solving.

A cognitive walkthrough should let you know whether the product is intuitive enough for regular users, will they achieve what they want, are the needed actions available, etc.

- Conversion-oriented evaluation is a method that will help you estimate the percentage of users who take a desired action – for instance, the percentage of users who purchased something on a website. But conversions can also refer to other actions, such as registrations on a website, or signing up for a subscription.

There are also microconversions, which are actions like clicking on a certain link or watching a video. From the perspective of UX analytics, microconversions can provide valuable information concerning web page design. An increase of microconversion rates is a clear indicator of a design improvement.

- Content audit’s primary goal is to conclude if the content is up to date and whether it’s usable. Is it accurate, is it SEO-friendly, can the tools and software deal with it? All in all, an audit shapes content management and helps estimate the feasibility of future projects and determine what the necessities are. Once you know your weak spots and strong points, it is much easier to determine future strategies and establish distinctive plans for future product improvements and developments.

- UX Review is an affordable and fast way to solve your UX issues. This is a professional analysis of user experience and usability based on scientifically-backed methods. The results are compared with the best practices in order to provide the most accurate information concerning market goals, strategy, app usability, its visual impact, intuitiveness, etc. With a UX Review you will improve your product’s competitiveness and value, and create something that will expand its marketing reach and fully meet your business goals. The data-driven report will provide you with a full assessment of your app’s potential, needs, and a roadmap for creating a product that will meet all your goals.
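To make the conversion-oriented evaluation described above more concrete, here is a minimal sketch of the underlying arithmetic: a conversion rate is the number of users who took the desired action divided by the number of visitors. The function name and the traffic figures are hypothetical, chosen only for illustration.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Return the percentage of visitors who took the desired action."""
    if visitors <= 0:
        raise ValueError("visitors must be a positive number")
    return 100.0 * conversions / visitors


# Hypothetical figures for a single page (not taken from any real study).
visitors = 1200
purchase_rate = conversion_rate(conversions=30, visitors=visitors)     # conversion
video_play_rate = conversion_rate(conversions=420, visitors=visitors)  # microconversion

print(f"Purchase rate: {purchase_rate:.1f}%")
print(f"Video play rate: {video_play_rate:.1f}%")
```

Tracking the same rates before and after a design change is one simple way to see whether the change moved users in the desired direction.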

4. Competitor analysis

Competitor analysis aims at verifying competitors’ strengths and weaknesses in relation to your/your client’s business. It helps to identify opportunities and threats.

The research can contain an analysis of all relevant parts of business, such as market position, marketing campaigns, types and design of products and services, promotions, localisations, etc. Every relevant piece of information helps to really get to know your competitors.

Competitor analysis can help with:

- Understanding the market, and forecasting opportunities and changes,

- Finding a niche yet untapped by your competitors and understanding the business potential that you can make use of,

- Understanding how your competitors connect and communicate with clients,

- Keeping up to date with your competitors’ offerings and observing how they perform,

- Better client targeting,

- Finding new clients.

Types of competitors:

- Direct competitors – those who run business on the same market, solve the same problems, offer the same functionality, share the client base.

- Indirect competitors – those who overlap at some points with your business.

When to use competitor analysis

A competitor analysis should be performed before a project starts. This way, you can gather all the relevant information and build the strategy and design for the product better.

It should also be an ongoing process, so that you stay up to date with what is happening on the market and can observe changes – what the market share is, who is in the leading position, etc.

How to perform competitor analysis

Understand the market. Before we start exploring competition, we need to understand our client’s business and product thoroughly. All the information that the client can provide is very important. You should ask the following questions:

- Is there a niche? What is it?

- How do you drive traffic/reach market groups?

- How do you track the results of your marketing efforts?

- Where are you trying to reach market groups?

- What are your promotion channels and strategy?

- How do you advertise or make information available?

- What are your must-haves and must-avoids?

- What country (or region) is the product to be launched in?

- What is the language the product is to be designed in?

- Are there any special requirements regarding the market that should be considered (e. g. cultural or religious limitations)?

- Is the product limited by the local law anyhow (for example, anti-spam regulations or copyright)?

- Is the product to be regionally diversified (e. g. different language versions)?

It's good to know your client's strategy at this stage and have access to all materials that were created beforehand.

Find competitors. Ask your client if they have already run some market research and can provide information about competitors. If they haven't, do the research on your own. You could get information like:

- Who are the competitors? Names, short information about them, their locations, websites etc.

- What are the competitors’ missions (if they exist)?

- How are they different?

- How are they similar?

- How is your brand unique and how does it differ from the competition? Why should people choose your brand and use your app as customers?

- What are the messaging, product/service offerings, etc., that set the competitors apart?

- What are the products the competitors offer (with pricing)?

- What are the competitors’ strengths? What are the competitors good at?

- What are the competitors’ weaknesses? Where do they fall short?

- Is there anything that your competitors cannot do but you could that would make the idea successful?

- Any similar product offerings, alternative solutions to the same problem? What are your users doing today instead of using your product?

- Are there any ideas already on the market that you would like to implement in exactly the same way?

- Are there other apps/products that you like or can be used as the inspiration?

Define the assessment criteria. Once you've analyzed all competitors, you should select the key ones for deep analysis. Before running the analysis, agree on the key factors with the client. What do you want to analyze? You ought to focus on the crucial criteria that can help the business.

For digital products, you can evaluate:

- Customer reviews

- Shopping, booking, ordering processes for e-commerce-based products

- Information Architecture

- User flows

- User engagement

And many more. You should identify the specific criteria for your analysis depending on your needs and what questions you want to have answered.

Also, it is very important to examine each competitor with the same criteria to get a reliable analysis.

Create a matrix. To analyze the outcome, create a competitor analysis matrix. There are several types of matrix templates you can use. It can be constructed from 2 types of columns:

- critical success factor – what you will be comparing

- company A, B, C, D.

In columns, you should add adequate ratings. You can evaluate the data suitable for your needs such as:

- Yes/No answers

- Ratings/Scores

- Comment

For a more detailed matrix, you can expand your CSF to create a more detailed measuring template consisting of:

- Factor – what you will be comparing

- Competitors:

- Weight – the weight of the factor for business purposes

- Rating – assessment of the competitor

- Score – score = weight × rating

It can be also used for more complex research.

V1

| Competitor | Feature 1 | Feature 2 | Feature 3 | Notes |
|---|---|---|---|---|
| Competitor 1 | | | | |
| Competitor 2 | | | | |
| Competitor 3 | | | | |
| Competitor 4 | | | | |
| Competitor 5 | | | | |

V2

| Critical success factor | Competitor 1 | Competitor 2 | Competitor 3 | Notes |
|---|---|---|---|---|
| Factor 1 | | | | |
| Factor 2 | | | | |
| Factor 3 | | | | |
| Factor 4 | | | | |
| Factor 5 | | | | |

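V3

| Factor | Competitor 1: Weight | Competitor 1: Rating | Competitor 1: Score (weight × rating) | Competitor 2: Weight | Competitor 2: Rating | Competitor 2: Score (weight × rating) |
|---|---|---|---|---|---|---|
| Factor 1 | | | | | | |
| Factor 2 | | | | | | |
| Factor 3 | | | | | | |
| Factor 4 | | | | | | |
| Factor 5 | | | | | | |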
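The weighted variant of the matrix (score = weight × rating) is easy to compute once the weights and ratings are agreed on. The sketch below is only an illustration: the factor names, the weights, the 1 to 5 rating scale, and the `FactorRating` class are all assumptions, not part of any specific template.

```python
from dataclasses import dataclass


@dataclass
class FactorRating:
    factor: str    # what is being compared
    weight: float  # importance of the factor for the business
    rating: float  # assessment of the competitor (assumed 1-5 scale)

    @property
    def score(self) -> float:
        # score = weight × rating, as in the detailed matrix
        return self.weight * self.rating


def total_score(ratings: list[FactorRating]) -> float:
    """Sum the weighted scores of all factors for one competitor."""
    return sum(r.score for r in ratings)


# Hypothetical data: every competitor is rated on the same factors and weights.
matrix = {
    "Competitor 1": [
        FactorRating("Ordering process", 0.5, 4),
        FactorRating("Customer reviews", 0.3, 3),
        FactorRating("User engagement", 0.2, 5),
    ],
    "Competitor 2": [
        FactorRating("Ordering process", 0.5, 3),
        FactorRating("Customer reviews", 0.3, 5),
        FactorRating("User engagement", 0.2, 2),
    ],
}

# Rank competitors from the highest total score to the lowest.
ranking = sorted(matrix, key=lambda name: total_score(matrix[name]), reverse=True)
for name in ranking:
    print(f"{name}: {total_score(matrix[name]):.2f}")
```

Keeping the weights identical across competitors matches the advice above to examine each competitor with the same criteria.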

5. In-depth interviews (IDIs)

An in-depth interview is a qualitative research method. It is an individual interview with potential users of the product, structured to cover the predetermined areas of interest and to get to know the users. IDIs help to

- Determine who the users are and what their needs and motivations are,

- See if they are facing problems and obstacles,

- Verify how users behave and what their actions and experiences are,

- Shape products to suit the target users.

Types of user research:

We can divide IDIs into those run in person or remotely – you can see the participants personally or you can contact them via an online communication service. It is good to see respondents in person because you can observe their behavior in real life. But sometimes, it is not possible due to large distances, difficult and distributed target groups, time and budget restrictions, or any other constraints. It is better to meet with users via a messenger rather than not do the research at all.

You can also categorize IDIs on the basis of where they are run:

- In a lab – you meet with participants in a specially prepared and adjusted laboratory. You can have access to professional recording equipment and a one-way mirror, which enable the client or the rest of the team to listen to the interview. But some users might be overwhelmed in a lab. It is important to adjust the environment to the user group.

- In the field – you meet with respondents in a coffee house, their office, their home, and so on. It is a more casual environment, so users can be more open and feel more natural.

And yet another type of a division is along the lines of how IDIs are run:

- Structured – the researcher uses a previously prepared list of questions and asks them in a predefined order. The researcher does not go beyond the testing scenario. The interview does not explore areas in depth, and the interaction between the respondent and researcher is rather formal.

- Unstructured – the main areas of the interview are predefined but the researcher has the freedom to dive deeper into problems, change questions and their order, or adjust the scenario. The interaction is more natural.

Recruiting users

For IDIs, it is important to set the potential target group for recruitment, e.g. people who are between 35-55 years of age, live in big cities in the UK, use smartphones, and work in the healthcare industry.
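If candidates sign up through a form, the example target group above can be turned into a simple screening check. This is only a sketch: the `Candidate` fields and the sample people are hypothetical, and a real screener would reflect your own recruitment criteria.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    age: int
    country: str
    lives_in_big_city: bool
    uses_smartphone: bool
    industry: str


def matches_target_group(c: Candidate) -> bool:
    """Check a candidate against the example criteria from the text."""
    return (
        35 <= c.age <= 55
        and c.country == "UK"
        and c.lives_in_big_city
        and c.uses_smartphone
        and c.industry == "healthcare"
    )


# Hypothetical sign-ups.
candidates = [
    Candidate("Alex", 42, "UK", True, True, "healthcare"),
    Candidate("Sam", 29, "UK", True, True, "healthcare"),
    Candidate("Kim", 50, "UK", False, True, "finance"),
]

shortlist = [c.name for c in candidates if matches_target_group(c)]
print(shortlist)
```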

The number of participants depends on the research problem and the number of potential user groups. The size of a respondent group can range from 5 respondents to 20, or even more.

For recruitment, you can use:

- Professional recruitment agencies,

- The customer base,

- Social media, e.g. Facebook groups,

- Friends of people who participated in research (the snowball method).

To keep it efficient, the interview should take no longer than 1 hour. It is important to make respondents feel comfortable and not to tire them out.

How to prepare for in-depth Interviews

- Testing scenario – it is a document with predefined questions and areas of interest. While building the scenario, it is crucial to analyze the research problem, which will make it easier to prepare questions. Try to use exploratory, open-ended questions. Users will answer more in depth, which will help understand their needs better. Questions with predefined choices should be used for quick verification of information, telling you which way you should follow with the scenario.

- Voice & video recorder – be prepared: it is good to have more than one tool ready in case one breaks,

- Additional consent forms if needed, for example when recording the session,

- Some small reward for the participants, e.g. an Amazon

Analyzing in-depth interviews

After running the interviews, you should:

- Transcribe the recordings and make notes,

- Categorize the outcome of research – you can use one of the following methods:

- content-coding – prepare a category-specific key for content and group the outcome properly (you can do it manually or with software),

- cluster method – grouping the outcome via affinity diagrams.
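As a rough illustration of content-coding with software, the sketch below tags interview statements using a category-specific key and counts how often each category appears. The key, the keywords, and the sample statements are invented for this example, and plain keyword matching is a simplifying assumption; real coding usually needs human judgment.

```python
from collections import Counter

# Hypothetical coding key: category -> keywords that signal it.
CODING_KEY = {
    "navigation": ["menu", "find", "search"],
    "trust": ["secure", "privacy", "trust"],
    "pricing": ["price", "expensive", "cost"],
}


def code_statement(statement: str, key: dict[str, list[str]] = CODING_KEY) -> list[str]:
    """Return every category whose keywords appear in the statement."""
    text = statement.lower()
    return [
        category
        for category, keywords in key.items()
        if any(keyword in text for keyword in keywords)
    ]


# Hypothetical quotes taken from interview transcripts.
statements = [
    "I could not find the settings menu.",
    "It felt too expensive and I don't trust it.",
    "The search worked fine for me.",
]

# Count how many statements fall into each category.
category_counts = Counter(
    category for s in statements for category in code_statement(s)
)
print(category_counts.most_common())
```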

What next? We have gathered all the information, so what do we do now? How can we use the knowledge about users?

The outcome of the research can be used to prepare, for instance, personas, empathy maps, or user stories. During the design process, they help maintain a user-centric approach, to design solutions for real users and to solve real problems.

6. Testing

Product testing is an evaluation of the product’s performance. It is a crucial part of product development, carried out to verify how a product fares in realistic conditions. Designers can do the best work they can but they are not infallible. That is why it is important to validate a product in real life, with real users. Errors can be made, and design is about iteration and continuous improvement so as to make a product meet the target users’ needs fully.

You can evaluate a product at any point in the process. You can test ideas, initial sketches, low and high fidelity wireframes, graphics, ready and implemented products, etc.

What are the benefits?

- Better understanding of users and their preferences,

- Verifying good areas and values of the product and also points and areas for improvement to make the product easier and more intuitive,

- Evaluating how the product suits users’ needs – if it solves their problems, if it is understandable and brings value,

- Choosing the best version of a solution (in case there are 2 or more conflicting solutions),

- Testing the concept to see the reaction of the target audience.

Popular methods of product testing

- User testing is a method used to verify how users interact with a future/current product. Users are asked to perform some tasks on the interface so that researchers can evaluate if the product is intuitive for users, if crucial actions are easy to perform, and if the used terms are understandable.

- Guerilla testing checks the usability of a product but does not require scheduling interviews. It is based on approaching people in the public sphere and asking them to test the product.

- A/B testing verifies which version of a feature, website, etc. works better for users. Two designs are tested with respondents in equal proportions. The version that performs better is considered the preferred one.

- Qualitative and quantitative interviews are run with respondents in order to verify users’ needs and problems.

- Qualitative interviews, or IDIs (in-depth interviews, described in the previous section) are an open conversation with a respondent. This is a kind of exploratory research, describing a qualitative problem, e.g. what, how, why.

- Quantitative interviews are also run by a researcher with a respondent, but they are more survey-style, based on questions whose results will let you analyse the problem quantitatively, e.g. how many, how often.

- Surveys are based on a set of questions, handed out to respondents, which relate to specific topics/issues. It is a quick method that helps to gather large amounts of data.

- Card sorting is a simple, yet effective, way of understanding how users think and feel the product should be grouped and organized. It is done by simply writing on a piece of paper and sticking it onto a board in the order one sees fit.

- Tree testing is known as reverse card sorting. It helps to verify how users can find information, and how they can navigate in a complex hierarchically organized structure (tree structure).

- Eye-tracking uses the measurement of the movements of respondents’ eyes while testing the interfaces/products/advertisement etc. This kind of research helps to identify where the user looks most frequently and what areas they omit.
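For A/B testing, deciding which version "performs better" usually means comparing conversion rates and checking that the difference is unlikely to be noise. The sketch below uses a two-proportion z-test, a common statistical check that the text above does not prescribe; the function name and the visitor numbers are assumptions for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare conversion rates of versions A and B.

    Returns the z statistic and the two-sided p-value.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("cannot test when the pooled rate is 0 or 1")
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical experiment: equal traffic split between the two designs.
z, p_value = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
```

A small p-value (for example below 0.05, a conventional but arbitrary threshold) suggests the difference between the two versions is not just random variation.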

7. User testing

User testing facilitates checking how end users interact with a given product or service. What’s really great about user testing is that the product itself doesn’t have to be live (but of course can be!). Instead of spending valuable resources on development – without being sure if the product will be received well by end users – one could build a prototype and conduct user testing on it.

Such an approach allows UX validation of the key scenarios and use cases among the product’s most important user groups before the actual product hits the market, and can potentially save lots of money and effort.

Even if testers end up being confused and dissatisfied, you’ll have all the needed information on what should still be worked on and improved. Also, testing a product that’s already live has similar benefits and can set you on the right path to refining it. However, it requires careful planning and correct testing methods to make sure you get constructive and unbiased feedback.

Basically, user testing can be divided into 4 main phases:

- Preparations,

- Recruiting Users,

- Conducting Sessions,

- Data Analysis.

Preparations before user testing

No matter how eager you are to jump into showing the prototype to your users, it’s best to cover the basics and create an environment that will support the whole user testing process:

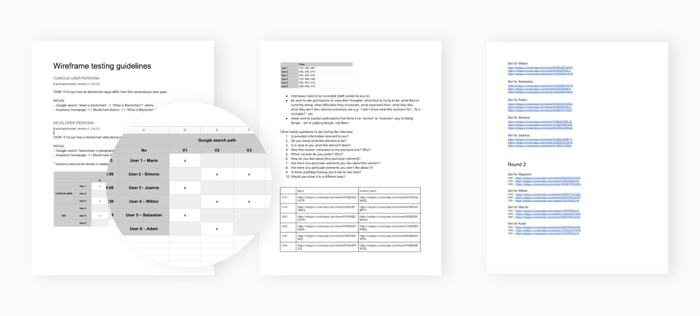

- Create a document or a spreadsheet where you'll keep all your prototype links, testing scenarios, session schedule, and so on.

- If you want to validate and get user feedback for different options, prepare different sets of prototypes you'd like to test.

- Give each prototype a unique name according to a pattern of your choice so that you don't get lost when trying to find out which version should be shown to which user.

- Prepare user testing tasks and scenarios, then choose the right user personas for them. Think of what you exactly want to get tested. It’s good to start your research by testing the most important functionalities of the product.

- Choose the software for your testing sessions that suits your needs. Here are some recommendations that let you record both the users themselves and their interactions with the prototype:

- Remote testing: GoToMeeting, Skype, Lookback, UserTesting, Google Hangouts (Enterprise plan)

- Onsite testing: Quicktime, Lookback, any camera on a tripod (it can be your phone)

Example of a preparations document

Recruiting users

After you’ve got all the preparations covered, it is time to decide whether you’d like to proceed with:

- Moderated user testing, where the researcher can communicate and interact with the testers.

- Unmoderated user testing, where tests rely on users trying to complete the tasks they are given without any external influence.

Both approaches have their pros and cons.

Moderated user testing provides more control and can lead to the discovery of new insights from the users, but is more costly and prone to bias. On the other hand, the unmoderated approach allows for cheaper and easier tester recruitment, but it doesn’t give you any chance to ask valuable follow up questions.

Setting up unmoderated sessions is relatively easy, as a third-party service will do all the hard work for you. In such a case, you can give UserTesting, TryMyUI or PingPong a go. However, when opting for moderated user testing, you might encounter some unexpected issues, such as difficulty finding the right testers or scheduling meetings across severe time-zone differences. Here are some tips on how to make the setup process a breeze:

- The general rule of thumb says that you only need 5 users to test your prototype. However, it is important that all participants match the product’s target audience, so, depending on the project, finding the right testers might turn out to be very easy or quite hard. After all, in the case of moderated tests, the ball is in your court. While looking for the right users, don’t be afraid to make use of social media, classified ad websites, and forums. Do some research and try to pinpoint the best places to reach a particular target group.

- For hard-to-find candidates, it's best to prepare some reward for their participation – usually gift cards for Amazon or Spotify (or any other equivalent service) will work quite well. You can also consider sending some of your company's swag as a token of appreciation.

- When posting the actual message that you’re looking for user testing participants, remember to include the most important information:

- Who are you looking for?

- What is the subject of user testing?

- What will the sessions look like?

- Will the session be recorded?

- How long will each session take?

- In which time-zone are you located and what time-frame are you aiming for?

- Is there any participant reward?

- Do the participants need to prepare for user testing in any special way?

- If you're planning on testing more than one prototype with each user, prepare all the prototype links for them beforehand. It's good to show prototypes in a different order to each participant to avoid making them biased towards the first shown version.

- Get in touch with candidates and schedule each user testing session using an online calendar, so you can smoothly manage all the meetings and easily refer to each session. It’s also great for keeping track of different time zones, as most calendars (Google, iCal) automatically adjust the meeting time zones to the participants’ locale. Remember to include the following in the invitation:

- the description of the event,

- the information on where and how the session will take place,

- how long it will take,

- and that it’s going to be recorded.
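The earlier tip about showing prototypes in a different order to each participant can be handled with a simple rotation, so that every prototype is shown first about equally often. The prototype names and participant list below are hypothetical, and rotation is just one possible ordering scheme.

```python
def rotated_order(prototypes: list[str], participant_index: int) -> list[str]:
    """Shift the prototype list by one position per participant."""
    shift = participant_index % len(prototypes)
    return prototypes[shift:] + prototypes[:shift]


# Hypothetical prototype names following a consistent naming pattern.
prototypes = ["checkout-v1", "checkout-v2", "checkout-v3"]
participants = ["P1", "P2", "P3", "P4"]

# Build a session plan: participant -> order in which to show prototypes.
session_plan = {
    participant: rotated_order(prototypes, index)
    for index, participant in enumerate(participants)
}
for participant, order in session_plan.items():
    print(participant, order)
```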

Conducting Sessions

Now it’s time for the real deal – the tests themselves. At this point, you should have everything ready. However, if you chose moderated user testing and will interact with the participants directly, some pitfalls can still affect the test’s objectivity. Asking suggestive questions or allowing for personal bias are among the most common mistakes. The simple tips below will help you avoid such issues.

- Start with thanking the participants for their willingness to take part in the user testing. Be transparent about the intent of recording sessions and be open about with whom the data will be shared. In order to share the recordings with stakeholders, you’ll need to have your testers’ consent.

- Explain the user testing scenario and your expectations regarding the course of the session. Tell participants you'd like them to share as many thoughts as they have and that this is still a work in progress, so their input is valuable. Don’t forget to turn on the recording!

- Try to talk as little as possible during the testing. Some users are less willing to share their thoughts than others, so you might want to interact with those to get some more information. A few interview probing techniques, discussed below, might help you get the information you need.

- The silent probe: try remaining silent for some time, people generally avoid silence and there's a chance the user will start elaborating on what they’ve said previously.

- The echo probe: you can try saying "You've said that [something that user said]. Could you elaborate on that?”

- The long question probe: “what are all the steps you have to go through to [do the action you’re interested in]?”

- When you want to get information about particular areas of the prototype that the user hasn't commented on so far, ask open and non-leading questions. Below, you’ll find some good and bad examples of interviewing questions.

Leading vs objective questions:

What do you like about using Twitter?

This will only get you answers about what’s good about Twitter, not the whole picture.

What's your experience with Twitter?

The respondent will actually share their thoughts on any positive and negative experiences they might have regarding Twitter.

Closed vs open questions:

Do you use Slack?

This can be answered with a simple yes or no, so it tends to produce a very short answer.

How do you find using Slack?

Here we ask the respondent to share all their experiences with Slack.

Data Analysis after user testing

Now you need to analyze the findings and prepare the final report with all the conclusions and recommendations. Again, even such a fairly easy process as documenting a user’s behavior and their experience with the prototype can come with unexpected challenges. Here are several good practices that will help you avoid them.

- It’s best to analyze the recordings with a partner or even more than one person to avoid including your personal bias while processing the information and writing down conclusions and theories behind user behavior.

- Listen carefully to what people say and in what context they say it. Write down the most important statements and quotes for each prototype/screen.

- Keep your documentation well organized, with all the prototype links and recordings available for each prototype. This comes in handy when sharing the documentation with other designers or stakeholders.

- It’s qualitative data, but try to keep track of the numbers. If an issue is reported by all the participants, it’s good to have the math on your side. Including numbers in the report also helps support your findings and insights.

- Prepare a summary report with all the insights from the user testing. It’s a good practice to include the key takeaways at the beginning of your report so that stakeholders who are not interested in reading the whole thing can easily pick up on the most important conclusions.
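Keeping track of the numbers can be as simple as counting how many participants ran into each issue. The snippet below is a minimal sketch of that idea. The participant IDs and issue labels are made-up sample data, and `summarize_issues` is a hypothetical helper name.

```python
from collections import Counter

# Hypothetical session notes: the issues observed for each participant
observations = {
    "P1": {"missed the search icon", "unclear checkout label"},
    "P2": {"missed the search icon"},
    "P3": {"missed the search icon", "unclear checkout label"},
    "P4": {"could not find the back button"},
}


def summarize_issues(observations):
    """Return (issue, affected participants, total participants), most frequent first."""
    counts = Counter(
        issue for issues in observations.values() for issue in issues
    )
    total = len(observations)
    return [(issue, affected, total) for issue, affected in counts.most_common()]


summary = summarize_issues(observations)
for issue, affected, total in summary:
    print(f"{issue}: {affected} of {total} participants")
```

A short list like this fits well in the key takeaways at the top of the report.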

8. Lean product experiments

Lean experimentation is at the core of the lean startup method. It is best understood as a part of the Build, Measure, Learn cycle which applies testing and experimentation to products and services, but also entire business models and organisations.

Here are 3 key benefits of lean experimentation. It:

- Enables organisations to iterate faster, develop better products and serve customers more efficiently.

- Helps impact the bottom line by increasing profits and reducing waste.

- Creates a culture where experimentation is the driving force behind the lean startup methodology and an essential step in optimising conversions.

Lean experiments always begin with a hypothesis

It might seem counterintuitive, but the idea is to conduct these experiments in a way that allows them to fail. In fact, it’s very reasonable and simple: let it fail while you’ve only invested a fistful of dollars in it, rather than when your bank account goes dry. You also need to define how to measure its success.

For example:

You can define in advance the percentage of participants who need to engage positively for the experiment to count as a success. If the test fails, you'll be able to look for the reason behind that failure and correct your direction on the basis of the new insights.

As you run experiments and validate your assumptions, you will start learning about the real needs of your business.
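Writing the hypothesis and its success measure down before the test makes the result hard to argue with afterwards. The snippet below is a minimal sketch of such a record. The `LeanExperiment` class, the 10% threshold, and the visitor numbers are assumptions made up for illustration, not part of any lean startup tooling.

```python
from dataclasses import dataclass


@dataclass
class LeanExperiment:
    hypothesis: str
    # Minimum share of participants who must engage for the hypothesis to hold
    success_threshold: float

    def engagement_rate(self, engaged: int, participants: int) -> float:
        if participants <= 0:
            raise ValueError("participants must be a positive number")
        return engaged / participants

    def is_validated(self, engaged: int, participants: int) -> bool:
        return self.engagement_rate(engaged, participants) >= self.success_threshold


# Hypothetical example: a sign-up form on a landing page
experiment = LeanExperiment(
    hypothesis="At least 10% of landing page visitors will join the waiting list",
    success_threshold=0.10,
)

rate = experiment.engagement_rate(engaged=18, participants=240)
validated = experiment.is_validated(engaged=18, participants=240)
print(f"Engagement: {rate:.1%}, hypothesis validated: {validated}")
```

In this made-up run the experiment fails, which is still a useful and cheap result: it tells you to revisit the offer or the audience before investing more.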

How to run lean experiments

There are many ways in which one can test a hypothesis. Here are 3 essential pointers to help you create smooth and straightforward tests:

- Make your tests small and systematic – don't put your organization in the position where you're testing your entire venture or too many variables at the same time. That way you risk becoming overwhelmed and eventually failing to implement what you've learned. It's best to make your tests small and regular. Choose a single problem, area, or variable. Create a hypothesis and test it systematically.

- Find the right tools for testing – many tools which come in handy for testing are free or cheap, opening the doors to more elaborate solutions when you get enough valuable insights from your initial tests and can justify that investment.

- Analyze, track, and measure – before testing, you need to have a technical framework in place that will help you track and measure your success. That's how you'll be able to see your progress and analyze your results. It's also easier to share and implement your findings when you have all the information in one place. Equipping your team with the right tools is one step – investing in the right configuration early on is another one.

The Lean Experiment Canvas (LEC)

The cornerstone of the lean startup methodology is the Lean Experiment Canvas (LEC) that allows you to run smarter experiments with better results that are in line with the Build, Measure, Learn cycle.

It's risky to leave learning and insights to chance. The LEC model helps solidify assumptions, risks, and metrics before you start testing. The objective of the LEC is to support businesses that want to uncover truths about themselves and their customers.

The model is made up of 12 blocks that collectively take organisations through the scientific method of lean experimentation. All blocks are equally important – but as you fill out information, you're bound to discover a unique pathway for your organisation to follow.

Types of lean experiments

- Wizard of Oz tests. This type of testing is conducted by a person who manually performs specific functionalities of a product or service. For example, if you'd like to find out whether investing in a chatbot is a good idea for your business, you can open a chat service on your website that is managed by a human who poses as a bot. You will learn what kind of questions people ask and how they interact with the chatbot.

- Qualitative interviews. Interviewing people and asking them open-ended questions will help you learn about their explicit and implicit needs. As you pose these questions and get answers, you will start to notice patterns that indicate what users find challenging and why. This type of insight will direct you toward developing a solution that makes sense for users.

- Prototype testing. It is based on creating a prototype of your solution and putting it in the hands of users to see how they respond to it. You will immediately get an influx of thoughts or concerns people might have about it even before the product is publicly available.

- Smoke testing. It is based on testing a service that doesn't exist yet to understand whether there is interest and engagement for it. A crowdfunding campaign is an excellent smoke test. Or you can just put a sign-up form on your landing page and see how many people subscribe to learn more about your offer.

For more information, check out this comprehensive overview of lean testing methods.

9. Smooth onboarding

Apart from being beautiful and impressive, websites and landing pages should show the value proposition. While your team may be really into advanced features or the captivating product story, "what does it do for me?" is the most crucial question for the customers.

Onboarding is a perfect way to convey your message and show people how your app can help them fulfill their goals. If your customers don’t understand how they should use your app and what the core features are, your one-time shot will be over and – which can be even more hurtful – people can start talking about the bad impression you’ve made.

People tend to evaluate interactions based on the first 50 milliseconds. If you want them to continue exploring and engaging, the initial view of your product should give them a clear idea of its theme, purpose, and offering. The design and a prominent value proposition in user onboarding should provide that.

Headline

Make sure that the main headline explains the value and is visible at first glance. Your visitors have no time to search for it. If you are lucky enough, they clicked your ad or just found you in Google – don't waste this opportunity. That's why it's so important for your visitors to learn what you do from the first impression.

Value proposition

There are many other ways to present your value proposition. You may add a video explainer or show the benefits of using your product with drawings or icons. If you'd like to play it safe, take a look at the market leaders in your sector and follow their example.

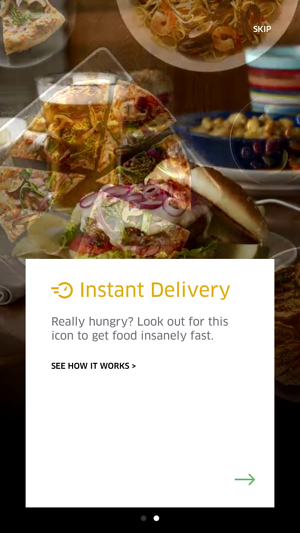

A very quick feature overview of Uber Eats, with the possibility to dig deeper if the user wants to know more about the app's features

Sign-up

The other thing is sign-up, a process that is often required just to start interacting with the app. Do not ask the user to register until it’s really necessary. Nobody wants to buy a pig in a poke, so even if you’re kindly asking for users’ credentials right after they download your app, expect far fewer sign-ups. Instead, allow people to look around, get to know your product, and appreciate its real value. Once they’re accustomed to the app, show them the value of signing up and staying longer.

10. Mobile optimization

In 2016, worldwide mobile and tablet internet usage surpassed desktop for the first time ever, signifying a huge shift in the importance of optimizing design for mobile devices. Failure to provide a great mobile experience can be costly, both in terms of lost business and damaged reputation:

- According to a survey by Google, 52% of users said that a bad mobile experience made them less likely to engage with a company.

- Mobile users are 5 times more likely to abandon a task on a site that’s not optimized for mobile.

- A study by Bizrate Insights revealed that the number one annoyance, cited by a third of online shoppers, was having to constantly enlarge their screen so they could click the right thing.

The mobile experience is totally different from the desktop one. You should always keep in mind that your users can be on the move while using your app, can get distracted by external factors (like a car horn or a talkative colleague) or internal ones (such as a notification from another app). The key to a great user experience is to be prepared for such situations and anticipate them at the right time.

Permissions

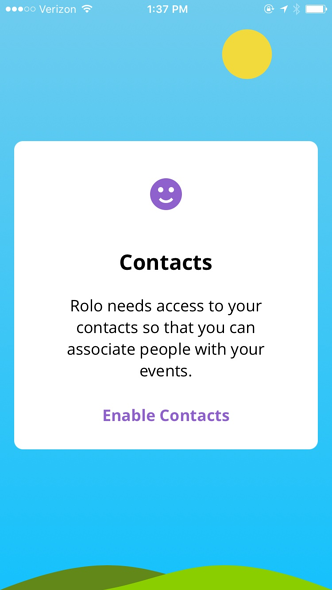

Ask for only those permissions which are really required to use your app. If you created an app to discover plants, it’s a good idea to ask for access to the camera. If it’s an app with podcasts – you probably don’t need the list of contacts imported from the user’s address book.

Rolo Calendar politely asks for permission and properly explains why the app needs it.

Notifications

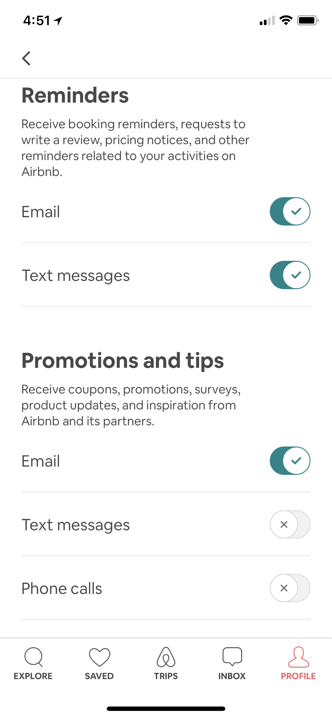

Bombarding users with messages every time something happens will eventually lead to frustration and to users uninstalling your app for good. By default, be very gentle with in-app notifications and let the users decide what type of alerts they want to receive. This way, your users will have a sense of control over your app and can personalize the notifications just as they like.

Airbnb users can decide what kind of notifications they will receive in terms of content as well as channel.

Top bar navigation

Even if you are building your product for a very conservative or professional audience that uses mostly desktop devices, your web application has to work properly on mobile. And this means replacing top bar navigation with a slide-in menu.

Ready-to-use components and patterns

Apple and Google created very extensive guidelines for mobile patterns – one of the goals of those guidelines was to stop creating new ways of achieving the same thing. Since it takes some time to learn a new pattern, and people are accustomed to using similar elements employed across various apps, it’s not a good approach to reinvent the wheel when it comes to very standard elements.

Remember about mobile constraints

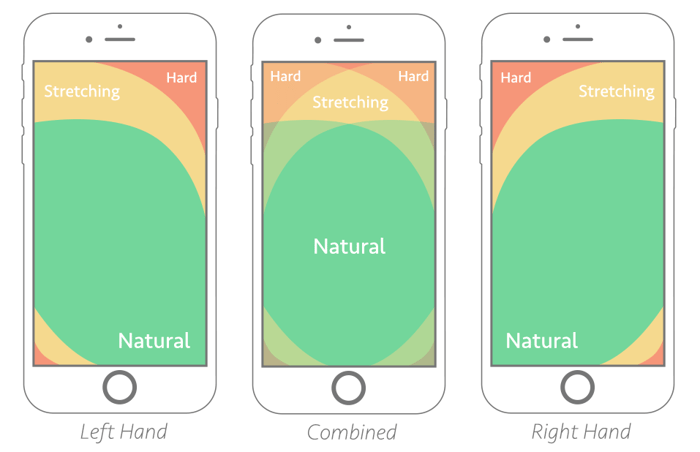

Also, remember about the physical constraints of mobile devices.

You probably know this from your own experience – it’s hard to reach elements in the upper area of the screen. Therefore, avoid placing frequently used features outside of the zone easily reachable by thumbs.

The other issue is the different sizes of people’s fingers – tapping on the wrong item and making spelling mistakes happen far more often on mobile than on laptops. Be sure you’re prepared to fix the problem quickly and counteract possible mistakes.

11. Navigation

Hard-to-find navigation or menu buttons are a common mistake that is quite easy to avoid, yet they have always been typical UX flaws. Although the term mystery meat navigation was coined 20 years ago to describe navigation links that are not visible until you hover the cursor over them, the mistake can still be found even in recently redesigned websites. The worst examples were usually built with Adobe Flash.

The rule here is pretty straightforward – the simpler, the better. When creating navigation, you should consider a few factors: which of your menu options are the most important, whether you can limit the number of elements to five, and whether the menu (in whichever form) will be frequently used by your customers. There are numerous patterns to choose from, but keep in mind that your users should never feel lost and should always know where they can start all over.

Navigation is a very broad topic and we described it more thoroughly in a separate article.

12. Multichanneling

When shopping, your customers are not considering the experience in terms of channels (such as mobile, desktop or tablet). They are just looking around, reviewing the product, and eventually wanting to buy it. What you need to provide is a convenient way to do so, no matter what channel your customer is currently using.

Therefore, when a person is checking out your products on mobile and then changes the device, you shouldn’t force them to perform the same search all over again. The same goes for filling in checkout details – when the user stopped at one of the steps while using a smartphone, do not require them to enter the same details through another channel. People will notice the convenience.

13. Animations

There are numerous benefits of adding meaningful animation to your app. It prevents confusion, lightens the cognitive load, and helps users understand their own or the system’s actions without explaining them in text. The main sections of your app you should consider in terms of animations are:

- Transitions between states

- Selected clickable components

- Loaders, showing progress and system status

Example of visual cues when a user is performing actions in Hive app

Research shows that the optimal speed of interface animations is somewhere between 200 and 500 ms, while all movements below 100 ms are just unnoticeable to the human eye. The animation speed should adjust to what the users are accustomed to, so it's a good idea to track not necessarily your competition, but the most popular apps among your target audience – be it Facebook, Messenger, Instagram, Gmail, Slack or Snapchat.
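If your animation durations are stored in one place, such as a list of design tokens, they can be checked against these ranges automatically. The snippet below is a minimal sketch using the 100 ms and 200–500 ms values mentioned above. The function name, the labels, and the sample durations are hypothetical.

```python
MIN_NOTICEABLE_MS = 100            # below this, movement is effectively invisible
RECOMMENDED_RANGE_MS = (200, 500)  # range described as optimal for interface animations


def check_animation_duration(duration_ms: float) -> str:
    """Classify an animation duration against the ranges above."""
    low, high = RECOMMENDED_RANGE_MS
    if duration_ms < MIN_NOTICEABLE_MS:
        return "unnoticeable"
    if duration_ms < low:
        return "faster than recommended"
    if duration_ms <= high:
        return "recommended"
    return "slower than recommended"


# Hypothetical durations taken from a design system
for name, duration in {"button-press": 80, "modal-open": 300, "page-slide": 800}.items():
    print(f"{name}: {duration} ms is {check_animation_duration(duration)}")
```

Treat the result as a prompt for review rather than a hard rule, since the right speed also depends on what your audience is used to.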

Functional animations are a great way of communicating actions to the user. Motion is understood intuitively and analyzed by different parts of the brain than static text, shapes, and color, which dominate interfaces. It also gives a real-life feeling to the digital world, as traditional buttons and switches move and click when we interact with them.

However, when using animations and moving elements, you need to be careful not to make any of these common mistakes:

- making animations slow and lengthy,

- presenting all the content on fade-in,

- overriding the default scroll.

A fade-in animation is considered a good way of attracting attention to a headline or an image. It also shows continuity between the state before and after the action. However, you should be very careful not to overuse it. The content should be presented in a static form to be readable.

Also remember that animations drive attention, so beware of animating every element in your app, since your customers won’t be able to focus on the important parts.

Animation can also be fun! Even a small twist can be considered a nice gesture towards your users, and they will definitely notice that you pay attention to the details.

Who says that logging in must be dull and boring?

Google is a great source of knowledge that should help you understand the principles of animation and motion in general.

14. Spacing

The attractiveness and clarity of the message rely on the simplicity of the website design and the organization of the content. Therefore, a minimalistic approach is highly recommended by every UX design agency. Staying away from unnecessary visual distractions should translate into a more effective call to action.

Aesthetic-usability effect

Every UX designer should be aware of the aesthetic-usability effect, a commonly observed phenomenon in which users perceive attractive websites as more usable, even when that is not the case. This tendency can work to the UX designer's advantage, but it is a double-edged sword and it should not trump the vital role of practicality.

The Gestalt law of proximity

There is a key rule you should keep in mind when it comes to spacing. It is called the grouping principle or the Gestalt law of proximity and was formed by the Berlin School of experimental psychology. It's quite straightforward – whenever you place objects relatively close to each other, our minds will consider them a group.

This is a dominant tendency. The Gestalt law works even with objects that have different shapes, sizes or colors – if they are close to each other, the brain will consider them a group.

Some beginner designers don't know the rule and will try to override the spacing with shapes or colors. This will create chaos in most users' brains. Remember to use spacing wisely. You can read more about the proximity principle and other Gestalt laws in this post.

15. Performance

While good design is important, it has to be backed up by superior performance to be effective. Speed must be at least on par with users’ increasingly high expectations, not only in terms of load times, but also in terms of the time it takes to navigate the page or app and reach the end goal.

- In their State of Content report, Adobe revealed that 39% of people will stop engaging with a website if images won’t load or take too long to load.

- 40% of people said that they left a website that took more than 3 seconds to load.

- The App Attention Span report by AppDynamics uncovered that more than 80% of users deleted or uninstalled at least one mobile app due to poor performance.

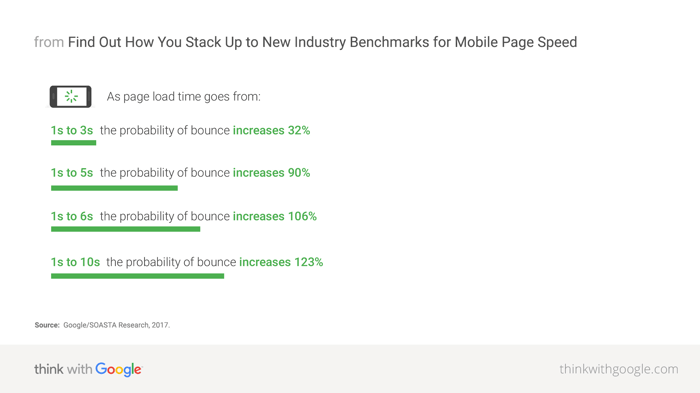

It may sound obvious, but we can still see the consequences of not paying attention to loading speed. The performance of your app deeply affects your customers’ satisfaction – as Google/SOASTA Research states, as page load time goes from 1 second to 5 seconds, the probability of bounce increases by 90%!

Although the research is about mobile landing pages, we can easily imagine that the same conclusion can apply to mobile apps – the slower your app is, the more frustrated people are. Other factors that affect an app’s performance are the number of crashes, the smoothness of animations, and even battery usage. If in a certain situation you can’t improve the speed of your app right away, remember to show relevant information to your users, such as a loader or a visual or textual explanation, in line with the principle: fake it till you make it.

16. Content

Content is the backbone of a website or app. It has proven to be an efficient way to:

- Attract potential customers,

- Onboard and retain users,

- Improve sales,

- Build brand awareness,

- Attract, nurture and convert leads,

- Improve customer satisfaction with customer service,

- Upsell,

- And improve revenue.

This means that design should support the messaging by not detracting from the content. The right information should be presented to the user at the right time, in an uncomplicated way.

- A report by Huff Industrial Marketing, KoMarketing, and BuyerZone revealed that 46% of visitors will leave a website due to lack of a clear message, meaning that they can’t tell what a company does.

- The same report also found that 86% of visitors want to see information about a company’s products or services on their homepage.

- Magnetic North found that one in three people will abandon a purchase because they can’t find the information they are looking for.

However, even if you have invested time and money in creating quality content, it may prove to be counterproductive if you do not control how your audience interacts with it.

Content distribution

Many companies focus on content creation and neglect content distribution. Both activities are crucial elements of content marketing strategy. Your website is the most critical channel for delivering your message. It is the center of your content distribution process.

Whenever your audience finds your blog posts by typing queries into a search engine, engaging in a social media conversation, or receiving a well-timed e-mail, they end up on your website. Consequently, you need to make the experience of reading the website useful and pleasant.

Content readability

Always keep in mind who your target audience is. Adjust the level of complexity to the least expert members of the group. Use short sentences and precise language. Explain the key terms you are using and make sure everyone understands them correctly.

For better readability:

- Big fonts with high contrast against the background,

- Avoiding CAPS in headlines,

- Avoiding italicized text,

- Avoiding long, box text blocks,

- Adjusting line spacing to the font size.

Content structure

Present your content in a well-organized manner. Embed short stories in larger stories that you can passively reveal to your audience step-by-step – when they are browsing or searching your website, and actively – when they receive proactive messages via e-mail, live chat messages, or social media posts.

Keep it simple

Avoid the biggest mistake: don’t flood your audience with content. Information overload is the “disease” of our times. The human brain is not used to processing all the data we have access to. At the same time, companies are bombarding us with messaging. No wonder people get very annoyed when they are served too much information. They want their problem to be solved, and your content should help them do it by giving a straight answer to their question.

17. UX copywriting

UX copywriting is about the communication between product/interface and users. UX writing relates to all possible messages and communications displayed to users. Every description, email, notification, label, error message, link, button text, etc. should be thought through and designed with the best UX writing practices in mind.

Every message that users encounter has an impact on their overall experience. That is why it is highly important that users understand what we want to tell them. UX writers focus on delivering short yet informative and understandable content, to limit users’ confusion. Appropriate copy should guide users and help navigate through the product.

UX copywriting best practices

- Fit the writing style to the product and brand identity.

- Use natural language – talk like your users would talk.

- Avoid jargon (that your users are not familiar with) and adapt your language to your product's target group.

- Be clear, concise and precise. Avoid floods of text and cognitive overload.

- Be consistent – think about your style and stick to it.

- Start with words as soon as possible – avoid lorem ipsum in your wireframes and designs.

- Test your copy and verify with the target audience whether the content is understandable.

- If you’re not sure whether your text would work, create different versions and start A/B testing.

- Supplement text with visuals.

18. Information architecture

Designers and developers have excelled in creating navigation systems that suit the users’ needs. As a result, a new branch of science has emerged: information architecture. In the case of marketing, information architecture is about organising and structuring the content of websites, web and mobile applications, and social media software.

Information architecture (IA) is a complex science, and it's much more challenging to fix an already broken IA in a working app than to build a healthy one from the start.

Your IA should put user satisfaction first. This is your primary business goal: to provide the best value to the customers. If this works well, everything else will come naturally.

Here are eight useful rules in information architecture:

- Follow best practices. Benchmark your website’s design not only against competition but the most popular content sites as well.

- Make the interaction with your content effortless. Speak the language of the audience and place the answers they are looking for where they can easily find them.

- Adjust the content to the audience. It should be simple, but avoid being condescending. If you are selling a sophisticated B2B solution, assume your website’s visitors already know something.

- Remember the context. Each piece of content should be coherent with your brand’s story. When writing about a product, keep in mind the advantages over competition and how the product interacts with supplementary products or services.

- Be consistent. Keep the content up to standard to provide a coherent experience throughout the journey. The topics you mention should be presented in a similar form and wording, and they should highlight the most important values.

- Don’t annoy the audience. Help them solve their problem and sell your product as an integral part of the solution.

- Avoid information overload. Draw attention by using concise and relevant headers and images.

- Engage the audience in a conversation about the product by asking questions, offering an option to leave comments, or sending personalized proactive messages to the reader.

Implementing an effective information architecture is difficult – this might be why many companies don’t give it the importance it deserves.

Your website visitors can explore the content by browsing through related articles and categories or searching for a direct answer.

There are three ways to organize your content:

- Hierarchy: from the most important to the least significant pieces of content. The most relevant categories should be included in the main menu, while the articles should have different size, color, contrast or alignment.

- Sequence: presenting the story of your solution, product or brand step by step. This approach is natural when distributing content with timely messages, but you can also use it passively on your website, where articles can be divided into episodes one following another.

- Matrix: where users may choose the path they want to take on their journey.

You can arrange the content by tagging it with a clear label according to the topic you are covering (such as Solutions, Products, Case Studies, Referrals, Team), the audience you are targeting (segments or personas) or chronology (by date).

19. ZMOT

As the Zero Moment of Truth (ZMOT) trend, a term introduced by Google in 2011, is gaining traction, the importance of content marketing is growing. The observation Google made is simple: when customers have a need, they search for information and make immediate shopping decisions based on it. According to the research conducted by Google, 88% of US customers do research online before buying a product. Google claims that the behavior has become global over the past few years.

Mobile devices made the trend even more visible, as the barrier “as soon as I get back to my PC, I will check it out” has disappeared. Making decisions with the use of smartphones puts even more pressure on clear messaging and information architecture. One-third of all consumer packaged goods Google searches are carried out on smartphones.

Be present in all popular searches related to your product or service, keep an eye on your competition, and have something interesting to say that will engage your audience. Don’t just focus on describing the advantages of your product. Explain the benefits of using your product’s category, and don’t be afraid of comparing your product with the competition.

Once people find you when looking for an answer to their question, you need to make them feel comfortable. The answer should be easy to understand and point directly to your solution through an effective CTA after explaining the advantages. Boost your message with case studies and testimonials.

20. Accessibility

Tim Berners-Lee, the creator of the World Wide Web, said: “The power of the Web is in its universality. Access by everyone regardless of disability is an essential aspect.” Accessibility has therefore been a part of the Web since the beginning. When creating a website, we should remember that it may be used by people with impaired sight, hearing, or motor skills.

Some of the accessibility rules are:

- It should be possible to enlarge the website (to at least 200%) with browser tools without horizontal scrolling. The enlarged page must not "lose" any content.

- All graphic elements should have a concise alternative text (alt), which describes what is on the graphic or, if the graphic is a link, where the link leads.

- All elements conveying content (texts, links, banners) and all functional elements must have a contrast ratio against the background of at least 4.5 to 1, and preferably at least 7 to 1.

- All sound, video and multimedia files (broadcasts, interviews, lectures) should be supplemented with a text transcription. The players for these files should be operable using the keyboard and accessible to blind users.

- All subpages should be structured with headers. Break the page into sections with headers so that people using screen readers can quickly move to the section of interest.

- Navigation within the entire website should be possible using the keyboard alone.

- All page titles must be unique and describe the content of the subpage the user is currently on.
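The contrast requirement from the list above can be checked programmatically. The snippet below is a minimal sketch based on the relative luminance and contrast ratio definitions from WCAG 2.x. The function names and the assumption that colors are given as six-digit hex strings are ours, for illustration only.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a six-digit hex color such as '#1a2b3c'."""
    value = hex_color.lstrip("#")
    r, g, b = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1 (identical) up to 21."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_minimum(foreground: str, background: str, minimum: float = 4.5) -> bool:
    """Check a color pair against a minimum ratio (4.5 by default, pass 7 for the stricter goal)."""
    return contrast_ratio(foreground, background) >= minimum


# Black text on a white background gives the highest possible ratio, 21 to 1
print(round(contrast_ratio("#000000", "#ffffff"), 2))
print(meets_minimum("#767676", "#ffffff"))
```

A check like this is useful when defining a color palette, but it does not replace testing the finished pages with real assistive tools.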

There are multiple ways to improve user experience, but in order to start doing it, you need to select the areas you want to focus on. Some refinements require a lot of time and resources, but with proper research and budget for changes, they will bear fruit in the future. Your users will thank you later!
