Mobile App Development Services

We help companies leverage technology by developing cutting-edge mobile applications with excellent UX (User Experience) across multiple platforms and devices, including Android and iOS.

Mobile app development services refer to the creation of software applications that are designed to run on mobile devices, such as smartphones and tablets. The process of developing these apps generally involves creating a user interface and design, coding the logic of the app, testing the app, and then making it available for download through the App Store or Google Play.

Mobile app solutions that deliver value to customers and elevate your brand

Expand distribution

1.8 billion people worldwide purchase goods online. Sell more with ease and immediacy.

Increase brand exposure

Apps account for over 90% of online time on smartphones. Be where your customers are.

Build engagement and loyalty

Send out relevant marketing messages and use push notifications to increase retention through reminders.

Optimize tactics and processes

Gather data and optimize business operations to increase efficiency and profitability.

Our scope of mobile app development services

At Netguru, we know that every detail of the development process is crucial, so we’ve built the expertise to provide a full range of mobile application development services. We can take responsibility for design, coding, management, or integration, or we can develop your product from scratch into a fully functioning application. Whether you're a start-up or a large enterprise, we'll adjust to your needs and create a beautiful digital product that meets your expectations. Here are some of the mobile application development services that we offer:

- Product Discovery & Product Research

- UX Design, UI Design & Branding

- Native Mobile App Development (Android or iOS)

- Cross-Platform Mobile App Development

- Preparing a Go-To-Market Strategy

- QA Advisory & Consulting

- Providing Customer Insights & Behavior Analytics

- Maintenance & Support

Case Studies of Netguru's Clients

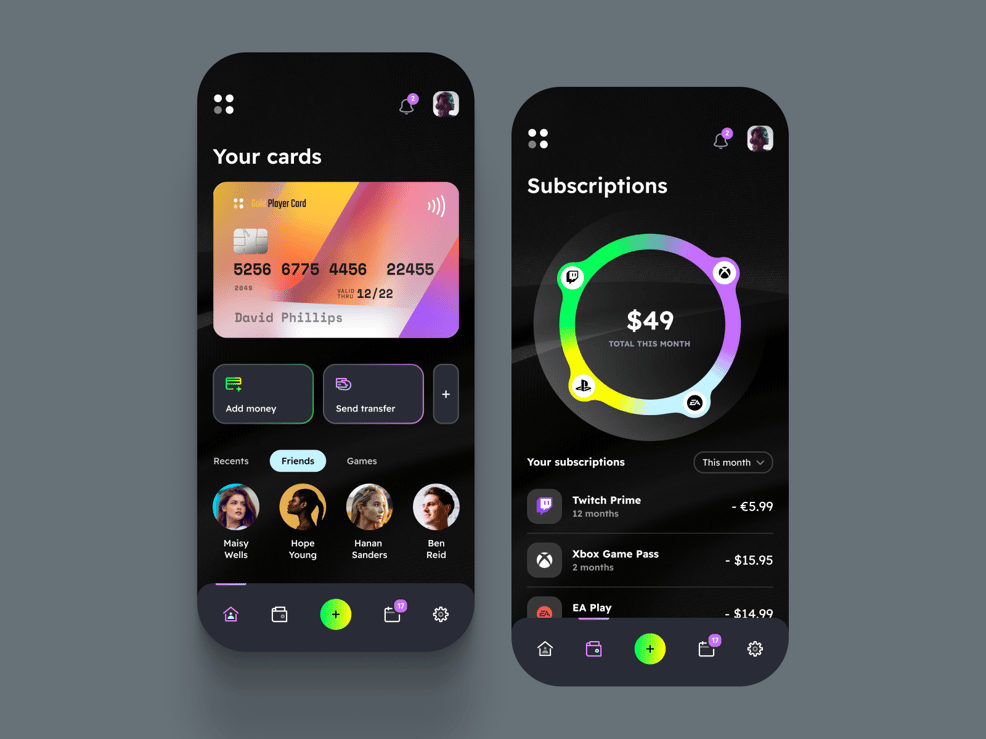

VisionHealth is a German startup. The client wanted to develop the Kata app to improve inhalation treatments and to establish partnerships with medical facilities. The role of Netguru was to provide m...

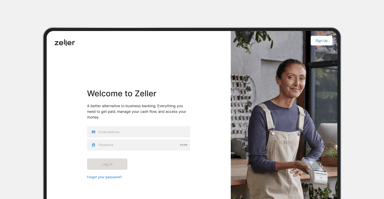

Zeller: a Fintech Mobile App that functions as a fully integrated financial operating system for business owners

Netguru partnered with Zeller to deliver a dedicated extension to their internal product and engineering teams. Zeller’s initial 12-month launch timeline meant that Netguru’s team were called upon to ...

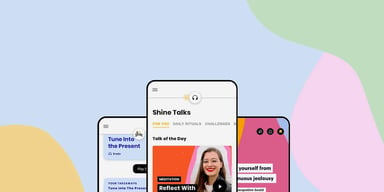

Shine: a Healthcare Mobile App that helps users build a healthy routine for the mind by enhancing confidence, productivity, and mental health

Netguru was integrated with the Shine engineering team to help maintain and develop the iOS and Android apps and support their growth. The scope of our cooperation with Shine included UI/UX design, so...

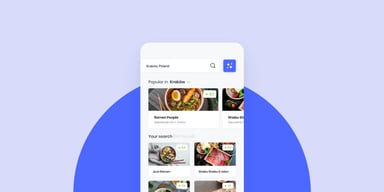

Foodetective: a Food and Beverage Mobile App for customer reviews and a one-stop shop and data aggregator for restaurant owners

Foodetective is a global foodie community that connects users to the best dining spots in their proximity. The goal of the project was to build two intertwined products: a platform with honest and tru...

DAMAC: a Real Estate Mobile App that delivers a wide range of features that assist real estate agents in their work

DAMAC Properties is one of the Middle East’s leading developers of luxury homes. The company developed an idea for an app in which all of the information the agents needed is available at their finge...

Żabka: a Retail Mobile App that leverages innovation to combat food waste

Żabka is the leader in modern convenience stores in Central and Eastern Europe. The client aims to address the problem of food waste with a smart solution that’s aligned with both their technology str...

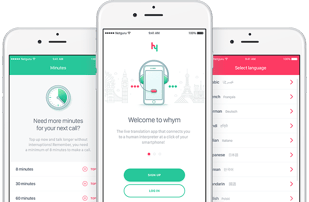

whym: an Education Mobile App for instant interpretation that allows users to communicate in different languages

Automatic translation is fast, but in an emergency, it can cause misunderstandings.

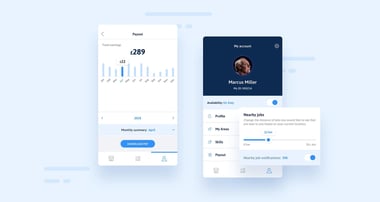

Shepper: a Job Marketplace Mobile App that sends real people to real locations in order to check on assets and collect vital information and data

Shepper provides digital reports of physical assets. Shepper sends real people to real locations in order to check on assets and collect vital information and data. Shepper needed a team that could sc...

Keller Williams: a Real Estate Mobile App that functions as a consumer and personal assistant app with an AI-powered CRM system

Keller Williams, the world’s largest real estate franchise, wanted to stay ahead of the competition by leveraging their data to boost artificial intelligence-powered technology. As a design and softwa...

Experienced across a broad range of industries

Fintech

From payment processing to investing and beyond, mobile app services make it easier than ever to access financial services on the go.

Retail & Ecommerce

By developing a mobile app, businesses can provide their customers with a convenient, user-friendly way to shop for products and services.

Healthcare

With mobile apps, healthcare providers can give their patients the tools they need to live healthier lives, like tracking their health goals or medical information.

Education

Mobile apps are a powerful tool for a variety of purposes, from keeping track of assignments and grades to providing a platform for distance learning.

Building apps on mobile platforms where your customers are

iOS

Create mobile apps for devices with the iOS operating system: iPhones, iPads, Apple TVs, and App Clips.

Android

Create mobile apps for devices with the Android operating system: smartphones, tablets, and TVs.

Cross-Platform

Create mobile apps that can be deployed on both iOS and Android (and even as desktop and web apps).

Wearable Devices

Create mobile apps for wearable devices: smartwatches (including Apple Watch) and fitness trackers.

Leverage our proven process of building intuitive, easy-to-use applications that attract and retain user attention

How we work

- Conducting Product Discovery and Product Research

- Creating the UX Design, UI Design, and Branding

- Coding the app with our veteran engineers

- Testing the app and making sure that everything works well

- Co-managing the app with your technology team

Why create your mobile app with Netguru?

Netguru stands as a front-runner in the software and mobile application development landscape. With a rich 14-year history, a team of over 500 experts, and more than 1000 successful projects, we bring unparalleled expertise in delivering top-notch cross-platform and native mobile apps. Our seasoned mobile developers are well-versed in cutting-edge mobile technologies and trends, positioning us to offer innovative and trustworthy solutions to our clients.

Powerful solutions to deploy in your company’s mobile app based on recent trends in digital technology:

- Internet of Things (IoT) - IoT apps collect data from connected devices and control them remotely. They also let users manage multiple devices from a single interface, making it easier to run a home or office full of connected devices.

- Augmented Reality & Virtual Reality - AR can let users virtually try on products before purchase, and VR can create immersive experiences that transport users to virtual worlds.

- 5G Technology - Higher bandwidth and lower latency enable, for example, AR and VR apps to offer more realistic and immersive experiences.

- Artificial Intelligence & Machine Learning - AI can improve development efficiency by automating processes such as testing and debugging. ML can also improve the performance of mobile apps by optimizing code for specific devices.

- Foldable Devices - Your business can use foldable devices to create virtual showrooms, provide interactive product demonstrations, view content in multiple formats, and store more information in a smaller form factor.

- Blockchain - Utilize blockchain to verify user identities and store data in a decentralized way. This can help prevent data breaches and ensure that only authorized users have access to sensitive information.

- Cloud Computing Integration - By using cloud-based services, businesses can reduce the time and cost of developing and deploying mobile applications and scale applications quickly and easily, without the need for extensive infrastructure.

Technologies we use for mobile development

- iOS App Development - SwiftLint, Objective-C, RxSwift, CircleCI, Swift, SwiftUI, CocoaPods.

- Android App Development - Java, Kotlin, Fastlane, Gradle, Coroutines, Dagger2, Jetpack.

- Cross-platform App Development - TypeScript, React Native, JavaScript, Flutter, NativeScript.

- Backend Development - Node.js, Ruby on Rails (RoR), Python, Firebase.

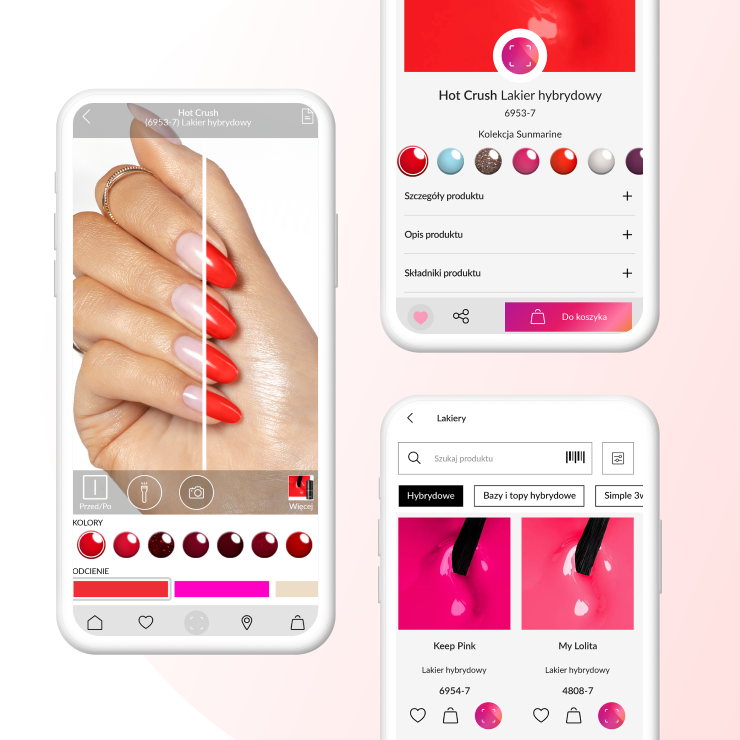

NEONAIL, a beauty mobile app with a Virtual Try-On feature

A cross-platform mobile app that lets users virtually try on nail products before purchasing them

Technologies: C++, Python, Kotlin, Swift, OpenCV, TensorFlow, TensorFlow Lite, React, Streamlit, Labelbox & V7, Neptune.ai

Platforms: iOS & Android

Client Expectations:

- Develop a virtual try-on app for manicures

- Provide a natural and lifelike 3D effect of colored nails in real-time

- Design a flawless UX leading to the e-commerce platform, enabling users to seamlessly test and purchase products

- Deliver the app in both iOS & Android through a cross-platform approach

Result of the partnership: After the app launched, NEONAIL users could try on various nail colors, shapes, and patterns in real time and seamlessly purchase the products they like.

Temi, a robot assistant you can control with a mobile app

A home robot powered by an Android-based operating system and a cross-platform mobile app to command it

Technologies: Swift, WebRTC, Dialogflow, WebSocket, Sinch

Platforms: iOS & Android

Client Expectations:

- Design and build a premium and affordable device that would secure them the pole position in the home robotics market

- Develop the robot’s Android-based operating system

- Create an iOS app and an Android app for controlling the robot

Result of the partnership: From facial and object recognition to voice ID and emotion detection, Temi offers a state-of-the-art experience, taking care of errands and automating simple tasks so users can focus on the things that matter and stay connected with loved ones.

What our partners say about working with Netguru

- I've really appreciated the flexibility and breadth of experience we've been able to tap from the Netguru team. While most of our work together has been in React Native, at times when needed we've also gotten support in QA, design, UX, iOS and Android as well.

- The difference between Netguru and other companies with which we have worked so far is that Netguru is good at taking ownership. I appreciate that approach a lot, because what we need is to ask for an outcome and have someone deliver it within a given timeline, without us needing to help at every step along the way. That means we’re not adding management overhead on our side.

- Working with the Netguru Team was an amazing experience. They have been very responsive and flexible. We definitely increased the pace of development. We’re now releasing many more features than we used to before we started the co-operation with Netguru.

Netguru in numbers

- 70+ Product Designers

- 40+ Frontend Developers

- 120+ Backend Developers

- 40+ Quality Assurance Specialists

Answering your mobile development queries (FAQ)

How much does it cost to build an app?

It’s not possible to quote a single figure. The cost of building a mobile app depends on many factors, from scope to budget. To estimate costs, request a project estimation.

For example, if you want both an iOS and an Android app, cross-platform development requires just one team of specialists for both platforms.

If you’d prefer native development, you’ll need two teams of mobile app developers, which is more expensive. Don’t worry if you’re not sure: our experts are on hand to advise and guide you.

What team do you need to build an app?

Building a successful mobile app takes professionals from several fields working together.

Netguru's mobile app development team is divided into iOS, Android, React Native, and Flutter teams, spanning more than 80 experienced engineers.

Depending on the project, Netguru also brings in mobile managers, tech leads, app designers and developers, project managers, UX/UI designers, QA specialists, backend developers, and machine learning engineers. Ultimately, the team's composition hinges on the scope of the project and the client's needs.

Which software is best for mobile app development?

It depends on the mobile app you’re developing, your preferences, and the advice you receive from experts like ourselves – we’re here to help.

Choosing the right technology is crucial, be it a platform-specific native app (Swift and Objective-C for iOS and Kotlin or Java for Android are the languages used here), a cross-platform app (React Native and Flutter are common here), or a hybrid app.

How do you manage the mobile app development process?

We’ll assign a Project Manager to manage the app development team. The PM will report back to you throughout the process, ensuring seamless communication and cooperation with the team of experts putting your app together. We’ll also put Quality Assurance and DevOps experts on the project from day 1 to make sure your app is perfect, from back-end to front-end.

How long does it take to build a mobile app?

That depends on the complexity of your app and the structure and stage of your project. Each stage takes a different amount of time, so if you’ve already completed some stages, such as writing the project brief and conducting research, it’ll take less time. It can take anywhere between three and nine months to go from idea to launch, but with a team of full-stack developers and experts, we can make sure you lose no time in getting your mobile app to market.

How can an enterprise mobile application stay secure?

Data security is more important and harder to implement than ever. We continuously upgrade and share our security skills and would never release a product we consider insecure. Security audits are one way we stay on top of potential weaknesses, and we also follow external guidelines, such as OWASP’s ASVS, OTG, or MSTG. Before we begin the mobile app development process, we’ll make sure processes are in place to enforce all of the above and guarantee that your product is secure before it goes to market.

Curious whether Netguru is the right fit for your project?

Looking for mobile app development services?

Before we start, we would like to better understand your needs. We'll review your application and schedule a free estimation call.
