Top Android Accessibility Tools Available to Users

Android accessibility starts with small aids such as dynamically increasing the font size, forcing elements to be drawn larger, or providing users with a built-in magnifier. With the Android support libraries and the introduction of Jetpack Compose, each new version of the system offers more and more out-of-the-box solutions.

Right now, in most cases, developers only need to make sure that keyboard navigation follows the intended focus chain, and OS extensions should take care of the rest. So today, we will focus on the tools provided within the Android ecosystem and on other, better-known external solutions.

TalkBack

The biggest and most advanced accessibility service available on Android today is TalkBack. With this option turned on, the device reads out the elements currently displayed on the screen as the user moves between them. If the developer added a label or accessibility description to a component, TalkBack can also describe icons.

The user navigates the screen with swipes: swipe right to move to the next component, and swipe left to go back to the previous one. When you hear the component you are looking for, double-tap the screen to perform the action assigned to that item.

TalkBack in version 13 comes with two braille features. The first one allows the user to turn the smartphone into a braille keyboard by enabling it in the accessibility options.

At any time, the user can switch from the pre-installed keyboard to a special input mode that turns the screen into a braille input surface. The second feature is built-in support for braille displays, Bluetooth devices that let the user read on-screen content in braille.

Source: The Android braille keyboard, Google

Right now, Google is working on an even broader image description tool, a project called Lookout. With the power of machine learning, Android will be able to do an even better job of describing pictures. Currently, developers can only provide a short, static description of an image, but Lookout will be able to describe the content presented on the screen.

Voice access

With this feature, the user is able to perform every action with a voice command. Tell the device “click back button,” and the action will be performed. Tell the device “show labels,” and the accessibility labels provided by the developer will be presented on the screen, so you can simply tell the device which named icon to interact with. In applications with more complicated interactions, the user can ask the device to “display the grid” and then perform more precise clicks.

Switch access

For switch access, users need to provide their own external device and connect it to the smartphone via USB or Bluetooth. Numerous variations of these devices are available on the market:

Source: Switch Access for Android on YouTube, Google

Switches allow users to move through the screen elements by pressing one switch and to accept the selection with another. With all of these methods of moving around the screen, developers need to remember to test every application for the correct sequence of focus changes between elements. If the text fields in a form change focus in unpredictable ways, it will cause a lot of frustration for users.
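The focus-sequence check described above can be sketched in plain Java. This is only an illustration under stated assumptions: `FocusOrderSketch`, `Field`, `expectedOrder`, and `firstMismatch` are hypothetical names, not Android APIs, and the "top to bottom, then left to right" rule is an assumed reading order that a real layout may deliberately override.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** Hypothetical focus-order check; none of these types are Android APIs. */
final class FocusOrderSketch {

    /** A focusable element, identified by name and the top-left corner of its bounds. */
    static final class Field {
        final String name;
        final int top;
        final int left;

        Field(String name, int top, int left) {
            this.name = name;
            this.top = top;
            this.left = left;
        }
    }

    /** Assumed reading order: top to bottom, then left to right. */
    static List<String> expectedOrder(List<Field> fields) {
        List<Field> sorted = new ArrayList<>(fields);
        sorted.sort(Comparator.<Field>comparingInt(f -> f.top).thenComparingInt(f -> f.left));
        List<String> names = new ArrayList<>();
        for (Field f : sorted) {
            names.add(f.name);
        }
        return names;
    }

    /** Index of the first position where the two orders differ, or -1 if they match. */
    static int firstMismatch(List<String> expected, List<String> actual) {
        int shared = Math.min(expected.size(), actual.size());
        for (int i = 0; i < shared; i++) {
            if (!expected.get(i).equals(actual.get(i))) {
                return i;
            }
        }
        return expected.size() == actual.size() ? -1 : shared;
    }

    public static void main(String[] args) {
        List<Field> form = new ArrayList<>();
        form.add(new Field("email", 100, 0));
        form.add(new Field("firstName", 0, 0));
        form.add(new Field("lastName", 0, 200));

        // The order a tester recorded while stepping through the form (made-up data).
        List<String> observed = new ArrayList<>();
        observed.add("firstName");
        observed.add("email");
        observed.add("lastName");

        List<String> expected = expectedOrder(form);
        System.out.println("Expected order: " + expected);
        System.out.println("First mismatch at index: " + firstMismatch(expected, observed));
    }
}
```

In practice, the observed order would come from stepping through the screen with a keyboard, a switch, or TalkBack; the sketch only shows how such a recording could be compared against the intended sequence.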

Live Captions and Live Transcribe

Right now, Android allows users to enable live, automatically generated captions for all spoken content happening on the screen. Unfortunately, so far, the supported languages are only English, French, German, Italian, Japanese (beta), and Spanish.

For example, a user who watches a subtitled show on YouTube may find out that the content creator also does live streams. If the stream is in one of these languages, they can watch it without worry, because the live captions will automatically display at the bottom of the screen in a toast.

Live Transcribe supports a much broader range of languages. It can switch seamlessly between spoken languages and provide the user with a properly sectioned conversation.

Sound notifications

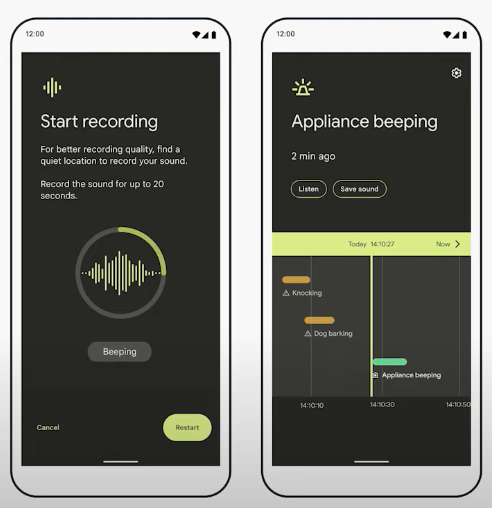

Sound notifications are a great feature for people with hearing impairments. While running in the background, the feature listens for everyday sounds that should alert the user. Door knocking, microwave beeps, a fire alarm, and even barking dogs can all be turned into a visual and haptic notification.

The user is alerted with a flashing flashlight, a text message describing the event, and a vibration. Afterward, the events that happened near the user are presented on a timeline with their timestamps.

Source: What’s new in Android accessibility, Android Developers

Remaining features

Android comes packaged with additional features that make smaller yet impactful changes to the system’s behavior.

There are multiple ways to force applications to be more accessible. For starters, users can override the font size used by all text views. This can be tricky because not all developers create responsive UI elements, and some content can be lost in the process. The display size setting works similarly, with the difference that not only text but also all other elements are drawn slightly larger.
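A small sketch can show why content gets lost when the font size is forced up. It assumes the simple linear model in which text sized in sp is converted as `px = sp × density × fontScale`, while containers sized in dp ignore the font scale. Newer Android versions may scale large text non-linearly, and the sketch treats a line of text as exactly as tall as its font size, so read it as an approximation. `FontScaleSketch` and its methods are hypothetical helpers, not Android APIs.

```java
/** Hypothetical helper illustrating sp vs. dp scaling; not an Android API. */
final class FontScaleSketch {

    /** Density-independent pixels: affected by display size (density), not by font size. */
    static float dpToPx(float dp, float density) {
        return dp * density;
    }

    /** Scale-independent pixels under the assumed linear model: density times the user's font scale. */
    static float spToPx(float sp, float density, float fontScale) {
        return sp * density * fontScale;
    }

    /**
     * True when text of the given size no longer fits a fixed-height container.
     * Simplification: the text is treated as exactly as tall as its font size.
     */
    static boolean isClipped(float textSp, float containerDp, float density, float fontScale) {
        return spToPx(textSp, density, fontScale) > dpToPx(containerDp, density);
    }

    public static void main(String[] args) {
        float density = 2.0f; // made-up screen density

        // A 16sp label inside a container fixed at 20dp high.
        System.out.println("Default font size clipped: " + isClipped(16f, 20f, density, 1.0f));
        System.out.println("Font scale 1.5 clipped: " + isClipped(16f, 20f, density, 1.5f));
    }
}
```

Under these assumptions, raising the display size changes `density`, so text and container grow together, whereas raising only the font scale makes the text outgrow a container with a fixed dp height.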

Smaller changes can be made by forcing text to use a bold font style or by switching to high-contrast text. The first option applies bold to all displayed text, and the second removes transparency (alpha) from text colors, making the text easier to read.
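To make the alpha removal concrete, here is a minimal sketch that assumes text colors are stored as packed 32-bit ARGB integers, the format Android uses for color ints. It mirrors only the behavior described above; the platform's actual high-contrast implementation may do more than this. `OpaqueTextSketch` and its methods are hypothetical names.

```java
/** Hypothetical illustration of removing transparency from a packed ARGB color. */
final class OpaqueTextSketch {

    /** Reads the alpha channel (0 = fully transparent, 255 = fully opaque). */
    static int alpha(int argb) {
        return (argb >>> 24) & 0xFF;
    }

    /** Forces the alpha channel to fully opaque and leaves red, green, and blue untouched. */
    static int removeAlpha(int argb) {
        return argb | 0xFF000000;
    }

    public static void main(String[] args) {
        int halfTransparentBlue = 0x80336699; // made-up text color, about 50% alpha
        int opaque = removeAlpha(halfTransparentBlue);

        System.out.println("Alpha before: " + alpha(halfTransparentBlue));
        System.out.println("Alpha after: " + alpha(opaque));
        System.out.println("Opaque color: #" + Integer.toHexString(opaque).toUpperCase());
    }
}
```

Semi-transparent text blends with whatever is drawn behind it, which lowers its effective contrast; forcing it opaque restores the contrast of the underlying color.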

If the user needs occasional help with reading, magnification and Select to Speak are two tools available from an accessibility shortcut bubble displayed over the content.

Once it is activated, users can use the built-in magnifying glass to briefly zoom in on the current content of the screen. Select to Speak allows the user to manually select the portion of text that should be read out by the Android system. It does not require the language to be preset; it can switch between languages seamlessly.

For color blindness adjustments, users can choose from color correction and color inversion. Available correction modes are deuteranomaly (red-green), protanomaly (red-green), tritanomaly (blue-yellow), and grayscale.

Not all features are as big as the ones mentioned so far, but they can still be a great help, so they deserve a mention too:

- Remove animations

- Large mouse cursor

- Accessibility menu – bottom sheet dialog for quick access to frequently used OS functions

- Timing controls

  1. Touch and hold delay – change the time of the switch between short and long press

  2. Time to take action – change visibility times of a message with an action

  3. Autoclick – external mouse cursor hover will auto click an item after a delay

- One-handed mode – moves the top of the screen to the middle of the screen for easier reach with your thumb

- Sound amplifier – amplifies quiet sounds picked up by the device while the user is wearing headphones

- Hearing aids – connects the device to hearing aids

- Audio adjustment – balances audio output between the left and right channels

- Support for USB/Bluetooth connected devices like keyboard and mouse

Using Android accessibility tools

Accessibility has become an essential part of digital design. Many tools and features make Android solutions more accessible. TalkBack, voice access, and sound notifications are just a few examples of the accessibility tools available to users who experience various types of impairments. In this way, Android tries to ensure that its phones and apps can be used by everyone.