The 8 Best Data Engineering Companies in 2026 & How to Pick One

The global data engineering market is projected to grow from $95.4 billion in 2024 to $167.8 billion by 2029, with a CAGR of 11.9%. Businesses now receive data from diverse sources, making it essential to partner with skilled data engineering providers to extract actionable insights.
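As a quick sanity check on the growth figures above, the compound annual growth rate can be recomputed from the two endpoints. The sketch below is illustrative only: the function name `cagr` and the variable names are our own, and the inputs are simply the 2024 and 2029 market sizes quoted in the paragraph.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.12 means 12%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted above, in billions of USD.
market_2024 = 95.4
market_2029 = 167.8

implied_rate = cagr(market_2024, market_2029, years=2029 - 2024)
print(f"Implied CAGR: {implied_rate:.2%}")
```

The endpoints imply a rate of roughly 12% per year, which is in line with the quoted 11.9% once rounding in the source figures is taken into account.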

This guide highlights the top data engineering companies for 2026, offering firm comparisons and key criteria to help you select the right partner. Picking one from among many data engineering service providers requires reviewing multiple factors beyond technical capabilities. We analyzed each company based on its service portfolio, industry expertise, client success metrics, technology stack depth, and scalability options.

Our selection highlights firms with proven success in building robust data infrastructure. Each company has delivered measurable results for clients ranging from startups to enterprises. We prioritized providers offering comprehensive solutions, from data architecture design to ongoing maintenance and optimization.

The companies listed span different specializations. Some excel in cloud-native data platforms. Others bring strength in legacy system modernization or industry-specific compliance requirements. Pricing models vary substantially, ranging from $50 per hour for boutique consultancies to enterprise-level contracts exceeding $200 per hour for global firms.

We evaluated technical proficiency across major platforms like AWS, Azure, Google Cloud, Snowflake, and Databricks. Certifications matter, as do partnerships with major technology vendors. The best data engineering companies maintain current certifications and invest in continuous training for their teams.

Client testimonials and case studies provided valuable insight into real-world performance. We looked for specific metrics: data processing speed improvements, cost reductions in infrastructure spending, and time saved through automation. Companies that shared transparent success stories with quantifiable outcomes ranked higher in our assessment.

Geographic presence also factored into our evaluation. Remote collaboration has become standard, but having local teams or offices in key markets can aid communication and ensure compliance with regional data regulations. Some businesses prefer working with providers in similar time zones for real-time collaboration.

Each profile covers core services, technology platforms, and specific reasons to consider that provider. We structured the information to help you compare options quickly. Where available, pricing ranges, team sizes, and founding years offer additional context for your decision-making process.

The comparison table at the end summarizes key differentiators across all eight providers and makes it easier to shortlist candidates based on your specific requirements.

Netguru

Netguru operates as a software development and data engineering firm based in Poznań, Poland. The company was founded in 2008 and employs between 250 and 999 professionals. It charges between $50 and $99 per hour. Netguru has delivered more than 1,000 projects for clients worldwide over 15 years in business. The firm holds Certified B Corporation status and is committed to using business practices that create positive social and environmental impact.

Core Data Engineering Services

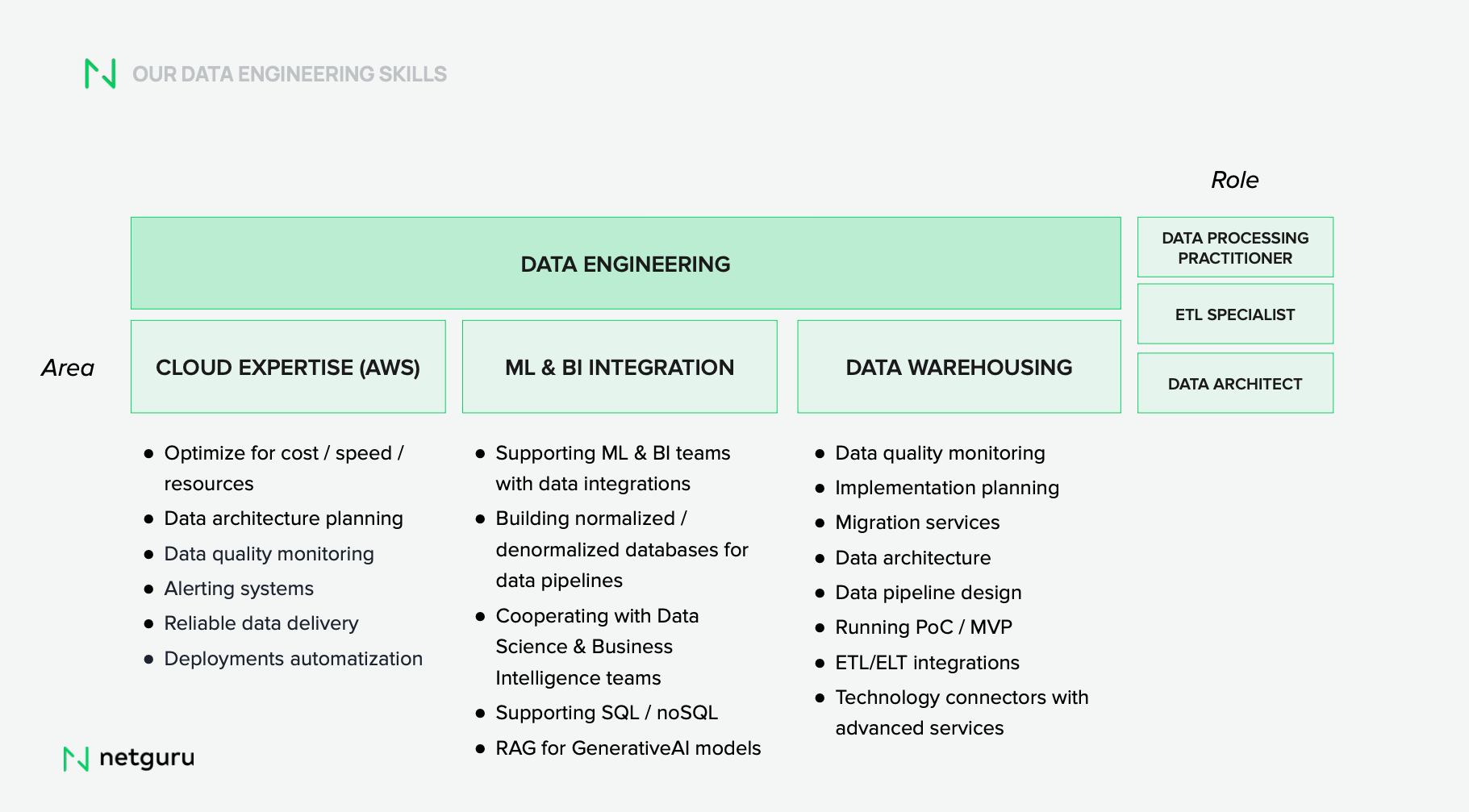

Netguru's data engineering practice centers on building systems for data ingestion, collection, storage, and analysis. The team creates advanced data pipelines that allow businesses to extract insights without manual data handling complexities. Their work involves developing sophisticated data processing systems and ensuring high standards of data quality and availability.

The company's team has specialists across four core areas:

- Data Architects design the overall blueprint for data management and provide strategic guidance on collection, integration, validation, and storage while implementing DataOps practices with automated infrastructure.

- ETL Specialists handle data movement between systems and support migrations from legacy databases to modern warehouses while building DAGs with scheduled workflows.

- Warehouse Specialists optimize storage and access in modern platforms and manage daily operations that include data marts creation and ELT flow orchestration.

- Data Streaming Specialists enable processing in real time by building resilient streaming analytics systems using cloud-native or serverless architecture for high-velocity data streams.

Netguru's engineers build data pipelines for smooth transfer between systems, handle transformation tasks, and ensure data remains consistently available and properly formatted for analytical processes. Their work integrates disparate data systems and supports evidence-based decision-making across organizations.
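To make the idea of a pipeline expressed as a DAG (directed acyclic graph) more concrete, here is a minimal sketch using only Python's standard library. It is not Netguru's implementation or any particular orchestrator's API. The task names are hypothetical, and a production setup would typically rely on a workflow scheduler rather than a plain loop.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform_join": {"extract_orders", "extract_customers"},
    "load_warehouse": {"transform_join"},
}


def run_task(name: str, log: list[str]) -> None:
    """Stand-in for real work; here we only record that the task ran."""
    log.append(name)


def run_pipeline(deps: dict[str, set[str]]) -> list[str]:
    """Run every task after all of its upstream dependencies have run."""
    log: list[str] = []
    for task in TopologicalSorter(deps).static_order():
        run_task(task, log)
    return log


execution_order = run_pipeline(dependencies)
print(execution_order)
```

The topological ordering guarantees that both extract steps finish before the join and that the load step runs last. A scheduler adds timing, retries, and monitoring on top of the same dependency graph.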

Key Technologies and Platforms

The firm works with major cloud platforms, including AWS, Azure, and Google Cloud, for scalable resources and model management. For machine learning, Netguru uses TensorFlow and PyTorch to build and train AI models. Natural language processing projects employ the NLTK and spaCy libraries for text data processing and analysis. The technology stack also includes Keras for neural network development and Scikit-learn for various AI tasks.

Netguru is a Certified Partner with AWS, Microsoft, and Google Cloud.

Why Choose Netguru?

Client testimonials highlight the company's delivery speed and quality. One client noted, "Excellence and speed. It's rare to get both, and Netguru delivers." Another stated, "Netguru has been the best agency we've worked with so far."

For example, Netguru developed an AI-powered solution for Newzip, a real estate-as-a-service platform, creating hyper-personalized user experiences. This implementation resulted in a 60% increase in user engagement and a 10% boost in conversions. The project demonstrates Netguru's ability to deliver measurable business outcomes through data engineering and AI integration.

The company's agile approach focuses on user experience. Teams design and develop applications tailored to each client's needs. Their communication practices employ platforms like Slack and Google Meet to maintain strong client-team connections.

Kanerika Inc.

Kanerika Inc. has become an emerging leader among data engineering service providers. The company specializes in AI-driven solutions for mid-sized and enterprise organizations. It holds CMMI Level 3, ISO 27001, ISO 27701, and SOC 2 Type II certifications that support data security and compliance throughout project lifecycles. Kanerika is a certified Microsoft Data & AI Solutions Partner and a Databricks partner. The company combines technical expertise with proven methodologies to deliver measurable business outcomes.

Core Data Engineering Services

Kanerika builds data foundations that support analytics and AI initiatives. The team creates reliable pipelines connecting multiple sources and maintains data quality standards. Their solutions handle both structured and unstructured data, which enables real-time processing for business-critical decisions.

The company's approach focuses on modern architectures like data lakes and lakehouses. Platforms include Databricks and Microsoft Fabric. Their engineers develop ETL workflows, streaming pipelines, and scalable storage solutions that support advanced analytics and machine learning. FLIP is Kanerika's proprietary zero-code DataOps platform, which lets business users manage pipelines without deep technical knowledge. The platform integrates with cloud environments such as Azure and AWS.

Key Technologies and Platforms

The technology stack spans Microsoft Fabric, Azure, AWS, Power BI, Databricks, Snowflake, Talend, and Alteryx. Kanerika's partnerships with Microsoft and Databricks provide access to enterprise-grade solutions while maintaining cost efficiency. The company's IMPACT methodology guides project delivery. It focuses on tangible outcomes and aligns automation with business goals.

Why Choose Kanerika?

One healthcare provider partnered with Kanerika to address fragmented clinical data and manual reporting processes. The implementation reduced data processing time by over 60% and improved regulatory compliance.

Databricks

Founded by the creators of Apache Spark, Databricks offers a unified data and AI platform built on lakehouse architecture. The Databricks Data Intelligence Platform combines data engineering, analytics, and machine learning workloads in a single environment. It eliminates traditional silos between data lakes and warehouses.

Core Data Engineering Services

Lakeflow is Databricks' end-to-end data engineering solution. This unified framework has three integrated components that handle ingestion, transformation, and orchestration.

Lakeflow Connect simplifies data ingestion through connectors for enterprise applications, databases, cloud storage, message buses, and local files. Managed connectors provide configuration-based ingestion with minimal operational overhead. Standard connectors let you access a wider range of data sources.

Key Technologies and Platforms

Apache Spark powers the platform's distributed processing engine. Databricks Runtime includes Photon, a high-performance vectorized query engine, along with autoscaling infrastructure optimizations. The platform supports the Python, SQL, Scala, and R programming languages. Delta Lake brings ACID transactions to data lakes and ensures consistency and reliability. Unity Catalog provides unified governance across all data assets with fine-grained access controls and automated lineage tracking.

Why Choose Databricks?

Hinge Health achieved a 50% cost reduction while managing 10x data growth. The platform's automatic optimization delivers record-setting performance for both data warehousing and AI use cases. Organizations gain a single toolset for batch and streaming workloads across major cloud providers.

Accenture Data & AI

Accenture Data & AI operates as a global professional services division of Accenture, which reported FY23 revenue of USD 64.10 billion. The firm has delivered over 100 Data and AI projects across industries and built expertise in helping organizations transform raw data into strategic assets that drive reinvention and growth.

Core Data Engineering Services

The firm implements six foundational data practices to unlock value. These practices include extricating data from functional silos, extending data mindsets across cloud environments, productizing data with proven development practices, and automating through data-as-code approaches. They also democratize access to high-quality data products and publish data naturally across ecosystems. Data engineers at Accenture build and maintain ETL pipelines using Informatica PowerCenter, Teradata, and AWS services that include S3, RDS, and Lambda.

Key Technologies and Platforms

Accenture maintains a partnership with Oracle and leverages Oracle Data Platform's comprehensive suite covering transactional databases, data lakes, warehouses, and AI/ML services on Oracle Cloud Infrastructure. Engineers work with Databricks and AWS services such as Redshift, Glue, Lambda, Step Functions, and CloudWatch. MongoDB Atlas powers semantic search solutions with vector database capabilities. The technology stack also includes BMC HelixGPT for incident prediction and resolution, plus Cloudera Data Platform for edge device data management.

Why Choose Accenture?

Organizations working with Accenture report measurable outcomes: up to 50% cost savings, 40% faster time to market, and a 75% reduction in processing times. AI and machine learning proof-of-concept development accelerates up to 15 times while reducing failure rates. Data-driven companies using their services report 10-15% more revenue growth than peers.

Snowflake

Snowflake's AI Data Cloud is a fully managed platform where data engineering, analytics, and AI workloads converge without the infrastructure burden that traditional systems require. Leading enterprises rely on this cloud-native architecture to power end-to-end data lifecycles, from ingestion through sharing, which enables faster innovation. The platform operates across AWS, Azure, and Google Cloud with similar functionality and supports continuous multi-cloud and cross-region operations.

Core Data Engineering Services

Data ingestion flows through multiple pathways. The COPY INTO command loads data from files to tables. Snowpipe ingests data as files arrive in staging areas. Snowpipe Streaming continuously loads row-level data into Snowflake tables using SDKs or REST APIs for low-latency requirements. OpenFlow connectors built on Apache NiFi handle sources like Microsoft SharePoint and Google Drive.

Transformation capabilities span several approaches. Dynamic tables refresh based on target freshness and transformation queries. Streams capture changes to base objects. Tasks execute transformation logic on schedules. Snowpark enables complex transformations using Python, Java, and Scala. dbt integration supports SQL-based transformation frameworks.

Key Technologies and Platforms

Snowflake Cortex provides access to large language models and AI-powered functions. Snowpark ML allows users to build, train, and deploy custom machine learning models using Python while keeping data secure and integrated. The platform's three-layer architecture has cloud services for metadata management and security, a compute layer with independent virtual warehouses using massively parallel processing, and a storage layer with compression and optimization.

Why Choose Snowflake?

Organizations transitioning to Snowflake report a 20% increase in business value through reduced project timelines and faster data sharing. Cloud migration eliminates hardware and maintenance costs while aligning spending with actual usage. Snowflake serves over 6,300 customers worldwide with a net retention rate near 174%. The Snowflake Marketplace offers data, data services, and native applications from leading companies, which creates monetization opportunities.

Informatica

Informatica delivers enterprise-grade data management through its Intelligent Data Management Cloud (IDMC), an AI-powered platform that simplifies data access and automates labor-intensive tasks in hybrid and multi-cloud environments. The platform runs on CLAIRE, an AI-driven engine that provides intelligent recommendations and automation capabilities. Gartner has named Informatica a Leader in the Magic Quadrant for Data Integration Tools for 16 consecutive years, and the company maintains its position among the top data engineering firms worldwide.

Core Data Engineering Services

IDMC provides six foundational capabilities for data engineering workflows. Data integration and mass ingestion ensure AI systems access diverse, voluminous datasets through efficient consolidation across scenarios. The platform supports both ETL and ELT processes with advanced pushdown optimization, delivering faster and more affordable integration.

Key Technologies and Platforms

Informatica offers over 300 pre-built connectors for enterprise data sources. PowerCenter, launched in 1993, remains widely used for building data warehouses. The platform supports serverless deployment, advanced cluster configurations, and advanced pushdown optimization (APDO) execution modes with automatic resource provisioning and scaling. Consumption-based pricing through Informatica Processing Units allows organizations to choose cloud services as requirements change.

Why Choose Informatica?

Organizations report a 328% average ROI with Cloud Data Integration while reducing the total cost of ownership by 65%. Development time decreases by 80% through AI-powered, low-code, and no-code tools. Advanced compliance and data governance capabilities make Informatica a common choice for heavily regulated industries.

TCS (Tata Consultancy Services)

TCS operates across 55 countries with over 607,979 consultants and ranks among the largest data engineering firms worldwide. The company's data and AI practice centers on building AI-ready data ecosystems that enable organizations to extract value from structured and unstructured information.

Core Data Engineering Services

Intelligent Data as a Service (iDaaS) is TCS's unified data solution. It processes internal and external data about customers, domains, products, and transactions, plus real-time data from sensors, devices, and apps. This platform creates domain-rich data environments with a focus on data quality, lineage, security, and compliance. Ready-to-configure analytical models powered by AI and ML speed up contextual business intelligence. Organizations can monetize data through an embedded marketplace and make data-driven decisions that improve customer experience and organizational efficiency.

Key Technologies and Platforms

TCS maintains strategic collaborations with Databricks, Informatica (25 years), Snowflake (since 2018), and NVIDIA. The technology stack spans Python, SQL, PySpark, Spark, Azure, AWS, and Google Cloud platforms. DataOps implementation uses StreamSets products, including Control Hub, Data Collector, and Data Transformer.

Why Choose TCS?

TCS holds multiple recognitions: Google Cloud Partner of the Year for AI, Data & Analytics, and Financial Services, plus Databricks Delivery Excellence Partner of the Year.

Cognizant

Cognizant earned recognition as Snowflake's Global Data Cloud Services Implementation Partner of the Year in 2025. The award celebrates excellence in delivering global-scale implementations and driving AI-ready transformation. This partnership milestone reflects a five-year strategic relationship that includes joint innovations and platform co-development.

Core Data Engineering Services

Cognizant's Data Modernization Method offers a fast path to architecting modern data backbones. You retain control while maintaining ethical governance and privacy compliance. According to Cognizant, the approach reduces the total cost of data by 40% and accelerates time to market by 30-40% through AI-enabled data engineering.

Key Technologies and Platforms

Cognizant partners with Snowflake as a launch partner for Snowflake Openflow. The platform supports batch and streaming data movement for structured and unstructured formats. Cognizant Skygrade provides AI-enhanced FinOps management that correlates utilization insights with cloud spend data.

Why Choose Cognizant?

Xerox's Chief Digital Officer noted that Cognizant and Snowflake brought deep expertise and clear roadmaps, which simplify complexity and accelerate compliance.

How to Choose the Right Data Engineering Company for Your Business?

Assess Industry Experience and Domain Expertise

Choose a company with proven experience in your business domain. Industry-specific know-how means the partner understands your data sources, challenges, and compliance requirements. Finance, healthcare, and retail sectors require distinct data-handling approaches. Domain knowledge also helps data engineers pinpoint the right data points and suggest ways to acquire, aggregate, and use supplemental data.

Review Technology Stack and Cloud Certifications

The provider must work with technologies that align with your organization's infrastructure. Look for expertise with modern tools such as AWS, Azure, GCP, Databricks, Snowflake, and Apache Spark. Check for credentials like AWS Certified Data Engineer or Microsoft Azure Data Engineer Associate. Cloud partnerships indicate technical proficiency and early access to new platform features.

Check Client Reviews and Success Stories

Review case studies and customer feedback. Past success stories indicate reliability and problem-solving skills. Client testimonials let you learn about project quality and timelines.

Consider Scalability and Support Models

Providers must offer flexible engagement models and adaptable services. Look for ongoing maintenance, performance monitoring, and 24/7 technical support. Adaptability means your data infrastructure grows with business needs without frequent overhauls.

Prioritize Data Security and Compliance

GDPR fines have topped USD 10 billion in the last year and a half. The average cost of a data breach hit USD 4.88 million in 2024 and is projected to reach USD 5 million in 2025. Verify compliance with GDPR, HIPAA, and CCPA. Security measures like encryption, access control, and auditing should be standard practice.
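One way to apply the five criteria above consistently is a simple weighted scoring matrix. The sketch below is purely illustrative: the weights, the 1-to-5 scores, and the provider names are all hypothetical placeholders, not ratings of any company in this guide. Adjust the weights to reflect your own priorities.

```python
# Hypothetical weights for the five criteria discussed above (they sum to 1.0).
weights = {
    "industry_experience": 0.25,
    "technology_stack": 0.25,
    "client_reviews": 0.15,
    "support_model": 0.15,
    "security_compliance": 0.20,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-criterion scores (1 to 5) into one weighted average."""
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Placeholder scores for two made-up candidates.
candidates = {
    "Provider A": {"industry_experience": 4, "technology_stack": 5,
                   "client_reviews": 3, "support_model": 4,
                   "security_compliance": 5},
    "Provider B": {"industry_experience": 5, "technology_stack": 3,
                   "client_reviews": 4, "support_model": 4,
                   "security_compliance": 3},
}

ranking = sorted(
    candidates,
    key=lambda name: weighted_score(candidates[name], weights),
    reverse=True,
)
for name in ranking:
    print(f"{name}: {weighted_score(candidates[name], weights):.2f}")
```

Writing the weights down before you score anyone forces the team to agree on priorities up front, which makes the final shortlist easier to defend.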

Comparison: The 8 Best Data Engineering Companies in 2026

| Company | Year Founded | Location | Team Size | Hourly Rate | Core Specialization | Key Technologies & Platforms | Certifications & Partnerships | Notable Achievements |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Netguru | 2008 | Poznań, Poland | 250-999 | $50-$99/hr | Data pipelines, ETL/ELT, data streaming, warehouse optimization | AWS, Azure, Google Cloud, TensorFlow, PyTorch, NLTK, spaCy, Keras, Scikit-learn | Certified B Corporation, AWS Partner, Gold Microsoft Partner, Google Cloud Partner | 1,000+ projects delivered; 60% increase in user engagement for Newzip; 10% boost in conversions |
| Kanerika Inc. | Not mentioned | Not mentioned | Not mentioned | Not mentioned | AI-driven solutions, data migration, DataOps platform (FLIP) | Microsoft Fabric, Azure, AWS, Power BI, Databricks, Snowflake, Talend, Alteryx | CMMI Level 3, ISO 27001, ISO 27701, SOC 2 Type II; Microsoft Data & AI Solutions Partner; Databricks Partner | 80% automation in migration; 30% improvement in data processing; 40% reduction in operational costs; 60% reduction in data processing time for healthcare client |
| Databricks | Not mentioned | Not mentioned | Not mentioned | Not mentioned | Lakehouse architecture, unified data & AI platform, Lakeflow (ingestion, transformation, orchestration) | Apache Spark, Delta Lake, Unity Catalog, Photon, Python, SQL, Scala, R | Founded by Apache Spark creators | 85% faster development (Porsche); 99% reduction in pipeline latency (Volvo); 50% cost reduction with 10x data growth (Hinge Health) |
| Accenture Data & AI | Not mentioned | Global | Not mentioned | Not mentioned | Enterprise data transformation, ETL pipelines, AI maturity advancement | Oracle Data Platform, Informatica PowerCenter, Teradata, AWS (S3, RDS, Lambda, Redshift, Glue), Apache Kafka, Databricks, MongoDB Atlas, BMC HelixGPT, Cloudera | Oracle Partner | FY23 revenue: $64.10B; 100+ Data & AI projects; 50% cost savings; 40% faster time to market; 75% reduction in processing times; 15x faster AI POC development |
| Snowflake | Not mentioned | Not mentioned | Not mentioned | Not mentioned | Cloud-native data platform, AI Data Cloud, automated data ingestion (Snowpipe, Snowpipe Streaming) | AWS, Azure, Google Cloud, Snowflake Cortex, Snowpark ML, JSON, XML, Apache Avro, ORC, Parquet, Iceberg | Multi-cloud platform | 6,300+ customers; 174% net retention rate; 66% improvement in data engineering productivity; 13,000+ hours saved annually; 20% increase in business value |
| Informatica | 1993 (PowerCenter) | Not mentioned | Not mentioned | Consumption-based (IPU) | Intelligent Data Management Cloud (IDMC), ETL/ELT, data quality & governance | CLAIRE AI engine, PowerCenter, 300+ pre-built connectors, INFACore | Gartner Magic Quadrant Leader for Data Integration Tools (16 consecutive years) | 328% average ROI; 65% reduction in TCO; 80% reduction in development time |
| TCS | Not mentioned | 55 countries | 607,979+ consultants | Not mentioned | Intelligent Data as a Service (iDaaS), TCS Datom, TCS Dexam | Python, SQL, PySpark, Spark, Azure, AWS, Google Cloud, Databricks, Informatica, Snowflake, NVIDIA, StreamSets | Google Cloud Partner of the Year (AI, Data & Analytics, Financial Services); Databricks Delivery Excellence Partner of the Year | 25-year partnership with Informatica; Partnership with Snowflake since 2018 |
| Cognizant | Not mentioned | Not mentioned | Not mentioned | Not mentioned | Data Modernization Method, Intelligent Data Works, Cognizant Ignition | Snowflake, Snowflake Openflow, Cognizant Skygrade (FinOps) | Snowflake Global Data Cloud Services Implementation Partner of the Year 2025; Snowflake Openflow Launch Partner | 40% reduction in total cost of data; 30-40% faster time to market; 80% migration automation; 75% conversion automation; 50% cost savings for manufacturing client |

Conclusion

Choosing the right data engineering partner means evaluating your specific needs against each provider's strengths. The eight companies we've covered offer distinct advantages, from Netguru's budget-friendly agile approach and proven 60% engagement improvements to enterprise-scale solutions from Accenture and TCS.

Identify your budget constraints and technical requirements first. Assess industry expertise and compliance needs next. Review client testimonials and success metrics that align with your business goals. The right partner should deliver measurable ROI while building a flexible infrastructure that grows with your organization.

Data engineering investments affect your competitive advantage, so choose wisely based on the criteria we've outlined.