🤖 MLguru #4: Self-supervised Grasping, Dragonfly, GAN Dissection, Skynet, and BigGAN

Learning object representations through self-supervised grasping

Self-supervised grasping relies on a simple insight: grasping an object removes it from the scene. Each grasp attempt therefore yields three kinds of images: the scene before grasping, the scene after grasping, and an isolated view of the grasped object itself.

The learned image embeddings should satisfy the relation objects_before_grasp - objects_after_grasp = grasped_object. This relation is encouraged through a fully convolutional architecture and metric learning via an N-Pairs objective. A trained network has two important properties:

- Cosine distance between embeddings allows us to determine whether objects are similar or identical. The similarity metric between object embeddings is used as a reward for instance grasping and removes the need to manually label grasp outcomes.

- The product of spatial feature maps and query object embedding produces a heatmap of regions similar to the query image.

Read more
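The two properties above can be illustrated with a minimal sketch in plain Python. It is not the authors' implementation: the 3-dimensional embeddings are hand-made toy values, and the helper names `grasp_reward` and `localization_heatmap` are hypothetical. A real system would obtain the embeddings from the trained convolutional network.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def cosine_similarity(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    norm = math.sqrt(dot(u, u)) * math.sqrt(dot(v, v))
    return dot(u, v) / norm if norm > 0 else 0.0


def grasp_reward(scene_before, scene_after, target):
    """Similarity between what left the scene and the requested object."""
    removed = [b - a for b, a in zip(scene_before, scene_after)]
    return cosine_similarity(removed, target)


def localization_heatmap(feature_map, query):
    """Dot product of the query embedding with every spatial feature vector."""
    return [[dot(cell, query) for cell in row] for row in feature_map]


# Toy embeddings, invented for illustration only.
cup = [1.0, 0.0, 0.0]
ball = [0.0, 1.0, 0.0]
empty = [0.0, 0.0, 0.0]
scene_before = [1.0, 1.0, 0.0]  # cup and ball on the table
scene_after = [0.0, 1.0, 0.0]   # only the ball is left: the cup was grasped

reward_cup = grasp_reward(scene_before, scene_after, cup)
reward_ball = grasp_reward(scene_before, scene_after, ball)

# 2x2 grid of spatial features: cup in the top-left cell, ball bottom-right.
feature_map = [[cup, empty], [empty, ball]]
heatmap = localization_heatmap(feature_map, cup)
```

In this toy setup the reward is high only when the removed object matches the requested one, and the heatmap peaks at the cell containing the query object.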

Dragonfly opposition

Dragonfly is a search engine prototype built by Google and designed to comply with censorship requirements in China. The public learnt about Dragonfly in August 2018, when an internal Google memo was leaked. Google employees wrote an open letter opposing the project, saying: “Our opposition to Dragonfly is not about China: we object to technologies that aid the powerful in oppressing the vulnerable, wherever they may be.” It has already been signed by 737 employees. Read more

GAN Dissection

Dissection is a method for quantifying the interpretability of individual units in a deep CNN by measuring the alignment between each unit’s response and a set of concepts drawn from a broad and dense segmentation. Applied to a GAN generator, it shows that the same neurons control a specific object class in a variety of contexts, and that the network understands when it can and cannot compose objects. For instance, turning on the neurons for a door at a suitable location on a building will add a door, whereas doing the same in the sky has no effect. Read more
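A rough sketch of the two ingredients described above: scoring how well a unit aligns with a concept, and intervening on units. The alignment score here is intersection-over-union between a thresholded activation map and a binary segmentation mask. The fixed threshold, the toy 2x2 maps, and the function names are assumptions made for illustration, not the method's actual code.

```python
def unit_concept_iou(activation, concept_mask, threshold):
    """IoU between a thresholded unit activation map and a binary concept mask."""
    intersection = 0
    union = 0
    for act_row, mask_row in zip(activation, concept_mask):
        for act, in_concept in zip(act_row, mask_row):
            active = act > threshold
            intersection += active and bool(in_concept)
            union += active or bool(in_concept)
    return intersection / union if union else 0.0


def ablate_units(feature_maps, units):
    """Return a copy of a layer's feature maps with the chosen units zeroed."""
    return [
        [[0.0 for _ in row] for row in fmap] if i in units
        else [row[:] for row in fmap]
        for i, fmap in enumerate(feature_maps)
    ]


# Toy data: one unit's activation map and a "door" segmentation mask.
door_unit = [[0.9, 0.8], [0.1, 0.7]]
door_mask = [[1, 1], [0, 0]]
iou = unit_concept_iou(door_unit, door_mask, threshold=0.5)

# Intervention: switch off unit 0 and leave unit 1 untouched.
other_unit = [[0.2, 0.3], [0.4, 0.5]]
ablated = ablate_units([door_unit, other_unit], units={0})
```

Feeding the ablated feature maps through the rest of the generator is what would remove the object from the image; forcing the units to a high value instead would be the "turning on" intervention.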

Should we already care about Skynet?

There’s an ongoing discussion at the UN about banning killer robots that use weapons without meaningful human involvement. None of the 88 countries participating in the meetings objected to further pursuing talks on banning lethal autonomous weapons systems. It’s time to recall Andrew Ng’s words: “Fearing a rise of killer robots is like worrying about overpopulation on Mars”. Read more

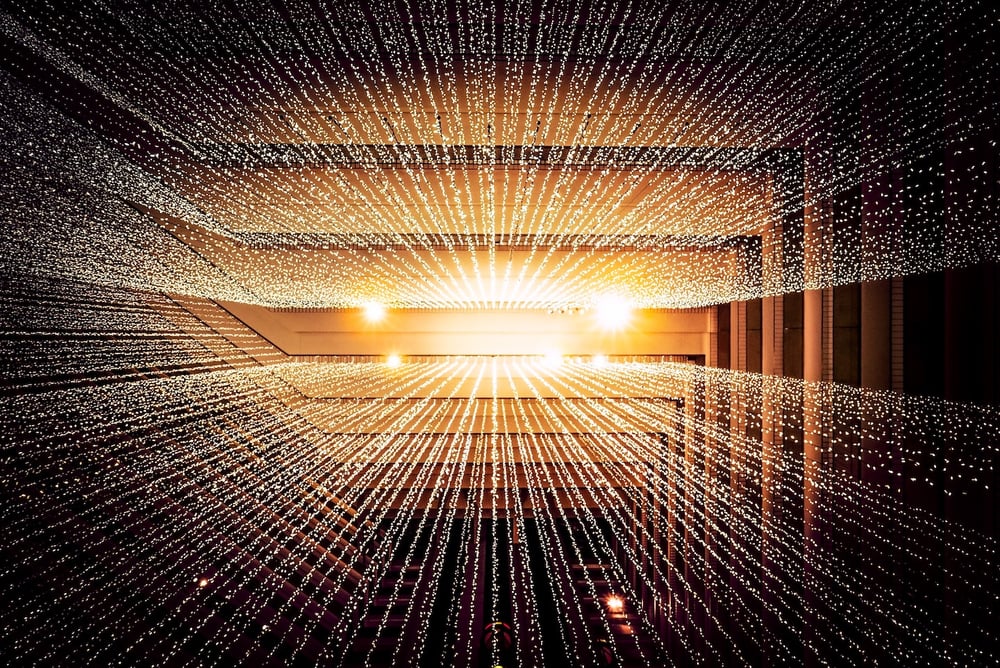

BigGAN - long may he reign

BigGAN has become the new state of the art in image synthesis, beating the previous best-performing system by more than 100 points of Inception Score. Its main contributions are:

- Architectural changes that greatly improve the scalability of training

- Orthogonal regularization applied to the generator, which allows fine-grained control of the trade-off between sample variety and fidelity

- Discovery of instabilities in training large-scale GANs and a way of remedying them.
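To make the second contribution more concrete, here is a minimal sketch in plain Python. It assumes the penalty form given in the BigGAN paper, which penalizes only the off-diagonal entries of W^T W, and it assumes that the variety/fidelity trade-off is exercised by sampling the latent vector from a truncated normal (the paper's "truncation trick"). The function names and the default `beta` are illustrative choices, not library code.

```python
import random


def orthogonal_penalty(weight, beta=1e-4):
    """beta * ||W^T W * (1 - I)||_F^2 for a weight matrix given as a list of rows."""
    n_cols = len(weight[0])
    penalty = 0.0
    for i in range(n_cols):
        for j in range(n_cols):
            if i == j:
                continue  # the diagonal (column norms) is left unconstrained
            gram_ij = sum(row[i] * row[j] for row in weight)
            penalty += gram_ij ** 2
    return beta * penalty


def truncated_normal(dim, threshold, rng):
    """Sample z ~ N(0, I), resampling any entry whose magnitude exceeds threshold."""
    z = []
    while len(z) < dim:
        value = rng.gauss(0.0, 1.0)
        if abs(value) <= threshold:
            z.append(value)
    return z


# Orthogonal columns incur no penalty; correlated columns do.
penalty_orthogonal = orthogonal_penalty([[1.0, 0.0], [0.0, 1.0]], beta=1.0)
penalty_correlated = orthogonal_penalty([[1.0, 1.0], [0.0, 1.0]], beta=1.0)

# A lower threshold would trade sample variety for fidelity.
z = truncated_normal(dim=8, threshold=0.5, rng=random.Random(0))
```

The penalty would be added to the generator loss during training, while the truncation threshold is chosen only at sampling time.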
